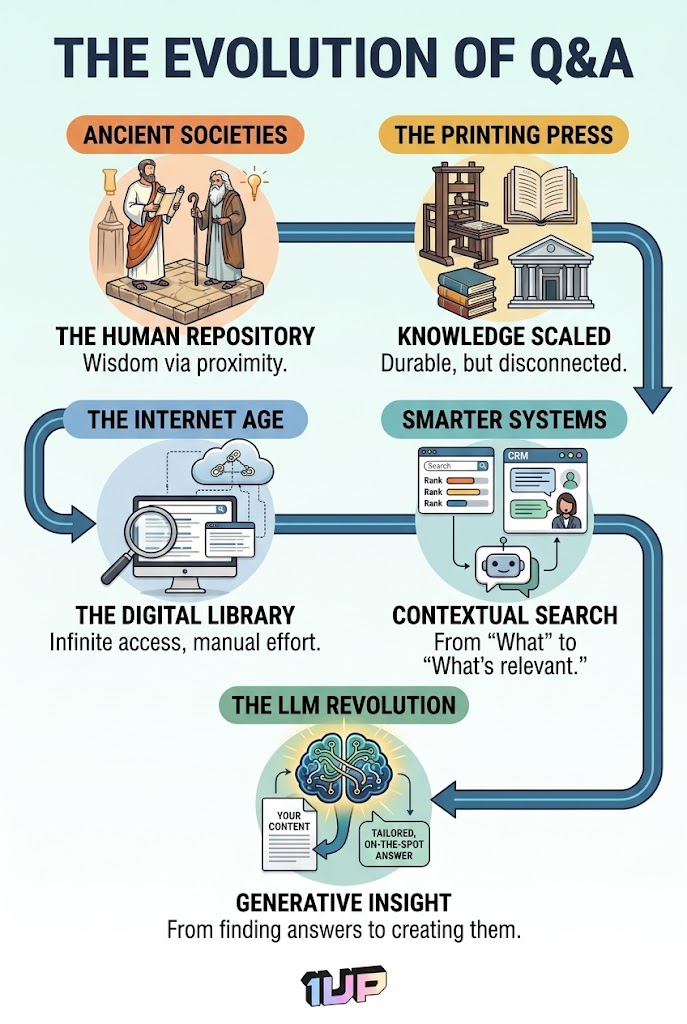

Someone has a question. Someone else has to find the right answer. That has been the basic problem for as long as people have worked together. It has not changed. What keeps changing is how we try to solve it.

From town criers to encyclopedias to Google to generative AI models, every generation builds new tools to bring answers from the people who have them to the people who need them.

We are in another one of those moments right now.

Answer engines powered by AI can handle thousands of questions at once, across every channel your customers and employees use. But the technology only works if the thinking behind it is solid.

Let's walk through eight things worth knowing before you build (or buy) an answer engine for your company.

A Brief History of Q&A

Knowledge Sharing Used to Be Personal

Humans have always needed answers. In ancient societies that job belonged to priests, scholars, and elders. They owned the knowledge and decided who got access to it. A master taught an apprentice. A guild protected its methods. If you were outside those relationships, the knowledge might as well not exist.

The Printing Press Changed Everything

Before Gutenberg, books were copied by hand and out of reach for most people. The printing press put the same information in front of thousands without a human intermediary. Reference books, encyclopedias, and libraries were the earliest precursors to the modern database. But the information was frozen at the moment it was printed. You could not ask for something new and get something back.

Meet… the Database

In 1966 IBM built one of the first database systems to track parts for the Apollo space program. In 1970 Edgar Codd published a paper proposing the relational database model that most databases still run on today. For the first time you could store information, connect it, filter it, and pull back exactly what you needed. That foundation is what made everything that followed possible.

The Internet Made Knowledge Freely Accessible

For 550 years the printing press was the best we had. Then the internet arrived and knowledge bases, FAQs, and help centers showed up overnight. Then Google arrived in 1998 and made it all navigable. PageRank meant the most trusted content rose to the top. But Google could only point you to the page. It could not read it and tell you the answer. The gap got smaller, but it did not close: you still had to read through the search results and synthesize a factual answer yourself.

Large Language Models Finally Closed the Gap (sort of)

It took about 25 years after the internet went public for AI to catch up. By the early 2020s large language models were capable enough for real business use. The difference from everything before is that LLMs do not just retrieve answers. They read your content and write a response shaped around the specific question being asked.

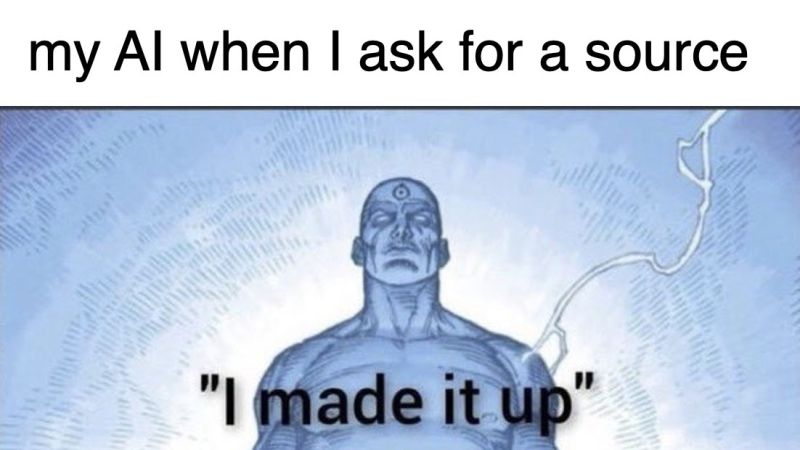

That shift from retrieval to generation is what makes modern answer engines worth taking seriously. But they are not perfect. LLMs still struggle with confidence scoring, meaning they often do not know what they do not know.

And they hallucinate, sometimes stating things that are completely wrong with total conviction. Getting the most out of them means building systems that account for those gaps, not pretending they do not exist.

The remaining gap between good human research and AI-powered instant answers now appears to be good, verifiable, up-to-date data.

How Do You Manage AI Answers at Scale?

Answer management is how companies keep track of all the answers they use for common questions.

Sounds simple, right?

But it's more than just recording Q&A pairs somewhere. The real challenge is keeping them current, deciding WHAT the right answer is, identifying WHO is responsible for keeping it accurate, and pinpointing WHERE it officially lives.

It is the foundation of everything else. Before you automate anything or use AI to generate responses, you need to know what your organization's actual answers are. Who decided them, who maintains them, and where they live. Think of this as the editorial layer. The unglamorous work that makes everything else possible.

1. Create a Single Source of Truth

Our 2026 State of AI in Knowledge Management Report found that scattered knowledge is one of the biggest challenges in early answer engine rollouts, cited by 58% of respondents.

We know this to be true in our daily workflows. Sales keeps answers in a shared drive. Support uses a ticketing system. Product teams document things in Confluence. When your answer engine tries to pull from all of these at once, without any clear hierarchy, users get contradictions. They get outdated information. They get confused.

The fix sounds simple: pick one place where the real answers live. A knowledge management platform, a structured content repository, or a well-maintained CMS can all work. The tool matters less than the agreement. One place governs, and everything else points back to it.
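As a rough illustration of "one place governs", the sketch below resolves conflicting answers by source precedence. The source names, the `SOURCE_PRIORITY` table, and the `Candidate` type are hypothetical and only show the idea; they do not describe any particular product.

```python
from dataclasses import dataclass

# Hypothetical source names; a lower number means higher authority.
SOURCE_PRIORITY = {
    "knowledge_base": 0,
    "confluence": 1,
    "ticketing": 2,
    "shared_drive": 3,
}


@dataclass
class Candidate:
    source: str
    text: str


def pick_governing_answer(candidates: list[Candidate]) -> Candidate:
    """Return the candidate that comes from the most authoritative source."""
    if not candidates:
        raise ValueError("no candidate answers")
    # Sources missing from the table rank below every known source.
    lowest_rank = len(SOURCE_PRIORITY)
    return min(candidates, key=lambda c: SOURCE_PRIORITY.get(c.source, lowest_rank))
```

With an explicit precedence table, a contradiction between the shared drive and the knowledge base has a predictable winner instead of depending on which document happened to be retrieved first.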

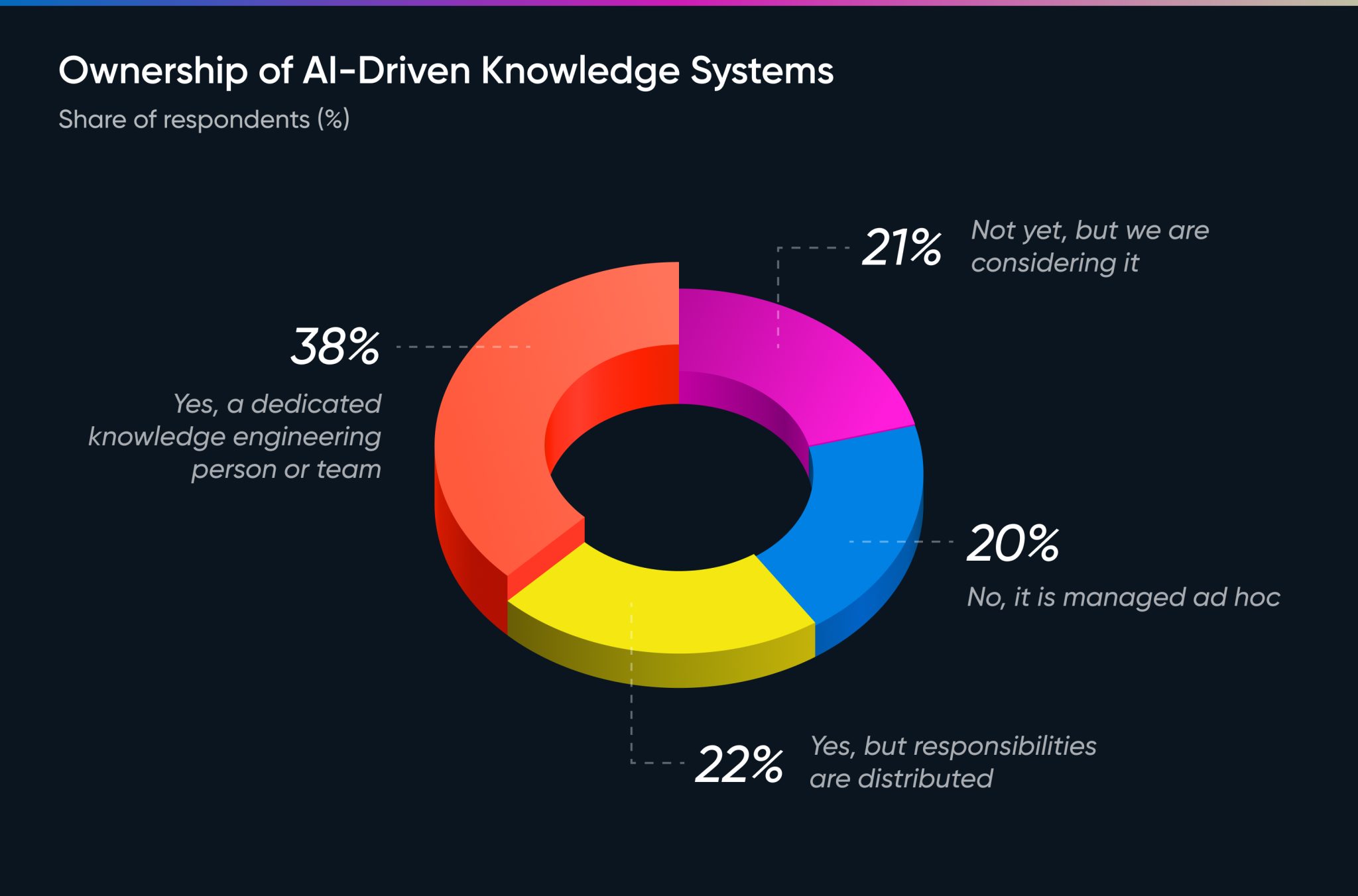

2. Assign Ownership and Mean It

Every answer needs an owner. Not a team. Not a department. One person who is responsible for keeping that answer accurate, current, and right for the audience reading it.

Our report found that only 38% of organizations have a dedicated knowledge engineering person or team. That means the majority are winging it. Companies that treat knowledge like infrastructure tend to build better products and have fewer support problems. Ownership is what keeps quality from slipping.

Enter the AI answer engineer.

An AI answer engineer is a role dedicated to designing and managing intelligent systems that organize and deliver company knowledge in real time. Rather than storing information statically, they teach AI to retrieve, update, and share accurate answers automatically. Embedded within the teams they support, whether sales, support, or marketing, they connect data sources, tune models for accuracy, and continuously refine the system so that knowledge becomes a living, self-improving asset tied to real business outcomes.

3. Set an SME Review Schedule

Answers go stale. Products change. A policy gets updated. A pricing tier gets retired.

An answer that was accurate in Q1 can quietly mislead someone by Q3.

The fix usually falls on subject matter experts who are already stretched thin. So make it easy. Flag answers that have not been touched in 90 days and send them directly to the right SME with one question: is this still accurate? Short focused reviews beat long quarterly audits that never happen.
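A minimal sketch of the 90-day flag described above, assuming each answer record carries an owner and a last-reviewed date. The `Answer` fields and the function name are illustrative assumptions, not a real schema.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_WINDOW = timedelta(days=90)  # the 90-day threshold from the text


@dataclass
class Answer:
    answer_id: str
    owner: str           # the SME who gets the review request
    last_reviewed: date  # hypothetical field: last time someone confirmed it


def answers_due_for_review(answers: list[Answer], today: date) -> list[Answer]:
    """Return answers that have gone 90 days or more without a review."""
    cutoff = today - REVIEW_WINDOW
    return [a for a in answers if a.last_reviewed <= cutoff]


def review_request(answer: Answer) -> str:
    """Build the one-question message sent to the owner."""
    return f"{answer.owner}: is answer {answer.answer_id} still accurate?"
```

Running this on a daily schedule and sending one `review_request` per stale answer keeps each review small, which is the point of the short, focused cadence.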

Here’s a simple way to think about review cadence:

One more thing worth doing: track which content is actually being used.

In 1up, for example, you can see what sources are getting used regularly versus what has not been touched in months. That usage data tells you what to keep, what to update, and what you can safely clear out of your knowledge base entirely.

A leaner, more accurate knowledge base will always outperform a bloated one.

Answer automation is the process of using technology to deliver answers without human involvement.

A question comes in and an answer goes out. No ticket. No wait time. No back and forth. It is the layer that sits between your company knowledge base and the person asking, routing the right answer to the right place at the right time.

Done well, it makes your organization feel faster and more responsive than it would otherwise.

4. Build Guardrails To Minimize Issues

No answer engine gets everything right. There will be questions it cannot answer, edge cases it was not built for, and moments where the only right move is to hand it off to a real person. The teams that build good systems plan for those moments before they happen.

Explain Where Answers Come From

Being able to trace how an answer was generated is what separates a useful system from a black box. Every 1up response includes source citations so users can see exactly what was used to generate it. Thin or off-topic sources are a quick signal that something may need a closer look before it reaches anyone important.

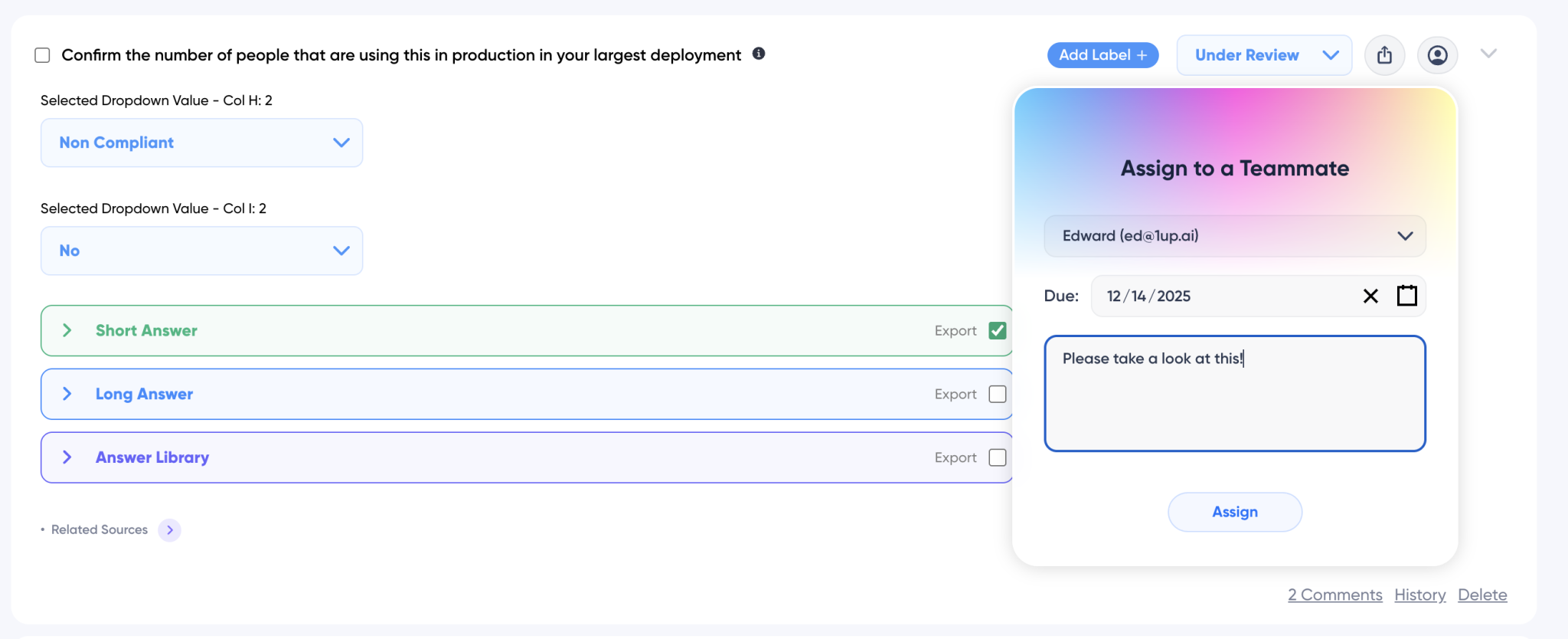

Get the Right Question to the Right Person

When an answer needs a human check, someone needs to own it. In 1up you can assign any generated answer to a subject matter expert, set a due date, and leave a note with context.

It moves to Under Review automatically and the assignee gets notified wherever they work. This helps minimize the chance of questions going unaddressed.

Let Users Tell You What Isn’t Working

Users know when an answer misses the mark. Give them a simple way to say so. In 1up, upvotes and downvotes are a quality management tool that helps you keep track of which answers are holding up and which ones may need attention.

A confident wrong answer does more damage than an honest one that says "I am not sure, here is who can help." Build this in from day one, not as an afterthought.
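One way to sketch that guardrail is a gate that only delivers a generated answer when it has at least one source citation and clears a confidence threshold, and otherwise hands off to a person. The `Draft` type, the `confidence` score, and the thresholds are assumptions made for illustration. How a trustworthy score is produced is a separate problem and is not shown here.

```python
from dataclasses import dataclass, field

FALLBACK = "I am not sure. I have routed this to someone who can help."


@dataclass
class Draft:
    text: str
    citations: list[str] = field(default_factory=list)
    confidence: float = 0.0  # hypothetical score in [0, 1]


def deliver_or_escalate(
    draft: Draft, min_confidence: float = 0.7, min_citations: int = 1
) -> tuple[str, bool]:
    """Return (message, escalated). Escalate when the draft is weakly supported."""
    weak = draft.confidence < min_confidence or len(draft.citations) < min_citations
    if weak:
        return FALLBACK, True
    return draft.text, False
```

The second value in the result tells the caller to open a human review task, so an uncertain answer turns into an assignment rather than a guess.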

5. Extend Answers to Multiple Channels

Your answer engine probably will not live in just one place. It might run a customer-facing chatbot, power an internal HR tool, support a sales team, and feed an onboarding portal all at the same time. Each of those channels has a different audience, a different tone, and a different expectation for how detailed or how brief an answer should be.

Think about this before you build. Channel-aware logic lets the same core answer show up differently depending on where it lands. More technical for an internal IT team. More reassuring for a new customer. More concise on a mobile screen. The underlying answer stays the same. The delivery adapts.
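Channel-aware delivery can be sketched as a small style table applied to one core answer. The channel names, length limits, and prefix below are made-up values for illustration. A real system would more likely vary the prompt or template for each channel than truncate text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChannelStyle:
    max_chars: int  # how long an answer may be on this channel
    prefix: str     # optional opener that sets the tone


# Hypothetical channels and limits.
CHANNELS = {
    "internal_it": ChannelStyle(max_chars=2000, prefix=""),
    "customer_chat": ChannelStyle(max_chars=600, prefix="Happy to help. "),
    "mobile": ChannelStyle(max_chars=200, prefix=""),
}


def render(core_answer: str, channel: str) -> str:
    """Adapt delivery for a channel while leaving the core answer unchanged."""
    style = CHANNELS[channel]
    text = style.prefix + core_answer
    if len(text) > style.max_chars:
        # Trim to the limit and mark the cut with an ellipsis.
        text = text[: style.max_chars - 3].rstrip() + "..."
    return text
```

The core answer is stored once and only `render` differs by channel, so a correction to the source reaches every channel at the same time.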

A good example of this in practice is 1up's Slack connector. Instead of asking someone to open a separate tool, teams can tag 1up directly in any Slack channel or send it a direct message. It pulls from the same knowledge base but delivers the answer right where the conversation is already happening. No context switching. No ticket. Just an answer.

Here's how it works in 1up:

Answer generation is what most people picture when they think about AI.

Rather than pulling a pre-written response from a database, the system reads your content and composes an answer shaped around the specific question being asked. That is a meaningful jump from everything that came before it. But it is also the pillar that requires the most care.

Get it right and your answer engine feels genuinely useful. Get it wrong and it confidently tells people things that are not true.

6. Ground the AI in Your Own Data

Large language models are good at a lot of things, but they do not know your business. They do not know your pricing. They do not know your internal processes. They have not read your policies. If you deploy a generative answer engine without giving it your own content to work from, you are letting it guess. That is a real problem in any customer-facing context.

Most enterprise teams solve this with Retrieval-Augmented Generation, or RAG. The model pulls relevant content from your knowledge base before it writes a response, so what it says is grounded in what you actually know. For this to work well, your knowledge base needs to be clean, current, and well-organized.
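The retrieve-then-write flow can be sketched without any model call. The example below uses simple keyword overlap as a stand-in for the vector search most RAG systems use, and it stops at building the prompt. The function names and the prompt wording are assumptions for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: dict[str, str], k: int = 2) -> list[str]:
    """Return up to k document ids ranked by word overlap with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(body)), doc_id) for doc_id, body in documents.items()]
    ranked = sorted(scored, key=lambda s: (-s[0], s[1]))
    return [doc_id for score, doc_id in ranked[:k] if score > 0]


def build_prompt(question: str, documents: dict[str, str], k: int = 2) -> str:
    """Compose a grounded prompt that cites the retrieved sources by id."""
    ids = retrieve(question, documents, k)
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in ids)
    return (
        "Answer using only the sources below. "
        "If they do not cover the question, say so.\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )
```

The prompt from `build_prompt` is what would be sent to the model. Because the source ids are in the prompt, the generated answer can cite them, which is where the knowledge base's cleanliness directly affects output quality.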

7. Test Accuracy and Tone as Different Things

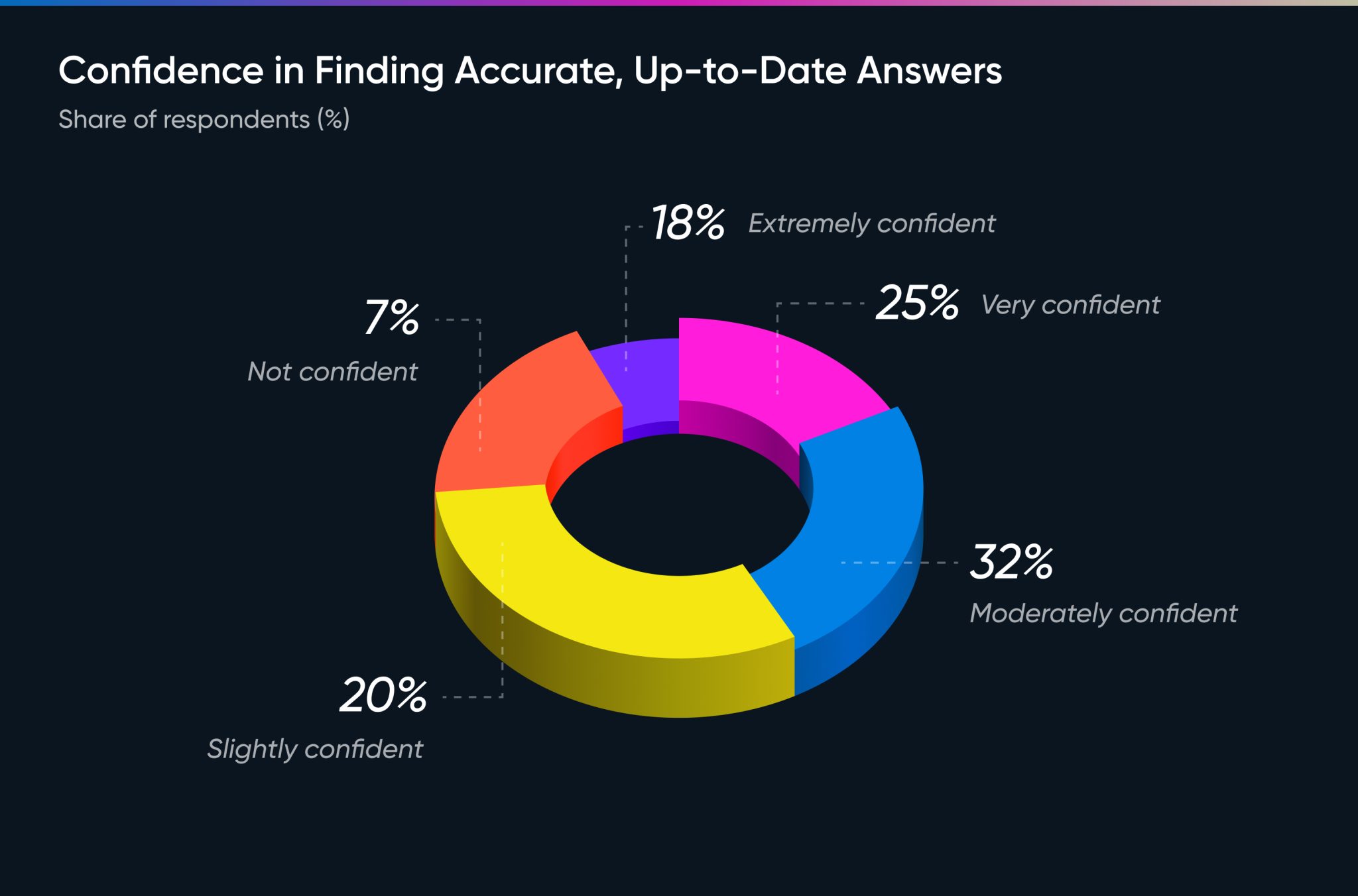

Accuracy was identified as the most crucial quality in AI by 62% of users in our research.

And yet only 25% said they were very confident in finding accurate, up-to-date answers when they needed them.

That gap is the problem answer generation is supposed to solve. But it introduces a new one.

There are two ways a generated answer can go wrong:

- It can be factually wrong because the underlying knowledge is flawed.

- Or it can be factually right but land in a way that feels off.

Most teams focus on the first and ignore the second. A correct answer delivered in the wrong voice can still damage trust.

Write down what your answer voice actually looks like. How formal is it? How much detail does it give? What topics does it avoid? Feed that into your system prompts and your review process. Test accuracy and tone separately. Use structured evaluation for facts. Use real people from your target audience for tone.
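Keeping the two tests separate can be as simple as reporting two scores that never get averaged together. In this sketch, accuracy is a crude check that required fact strings appear in the answer, and tone is the mean of 1 to 5 ratings collected from human reviewers. The thresholds and function names are illustrative assumptions.

```python
from statistics import mean


def accuracy_score(answer: str, required_facts: list[str]) -> float:
    """Fraction of required fact strings found in the answer (case-insensitive)."""
    if not required_facts:
        return 1.0
    text = answer.lower()
    return sum(fact.lower() in text for fact in required_facts) / len(required_facts)


def tone_score(ratings: list[int]) -> float:
    """Mean of 1-5 ratings from human reviewers; needs at least one rating."""
    return mean(ratings)


def evaluate(answer, required_facts, tone_ratings, min_accuracy=1.0, min_tone=4.0):
    """Report accuracy and tone as separate results with separate verdicts."""
    acc = accuracy_score(answer, required_facts)
    tone = tone_score(tone_ratings)
    return {
        "accuracy": acc,
        "tone": tone,
        "accuracy_ok": acc >= min_accuracy,
        "tone_ok": tone >= min_tone,
    }
```

An answer can pass one check and fail the other, and with two verdicts the failure goes to the right fix: the knowledge base for facts, the system prompt or style guide for voice.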

8. Set Up Feedback Loops on Day One

Generated answers that nobody evaluates will drift over time. A model can be subtly wrong in ways that are hard to catch unless you are actively checking. Your users are your best signal and you need a system that captures what they are telling you.

At minimum, build in the following from day one:

- Upvote/downvote on every generated response

- Natural language feedback fields for when a simple rating is not enough

- Regular human review of a random sample of answers

- Tracking of escalations and follow-up questions as signals that an answer missed the mark

Use what you learn to fix individual bad answers, but also to spot patterns. If the system is consistently weak in a certain area, that is worth knowing and worth fixing before it becomes a trust problem.
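Spotting patterns rather than single bad answers can start with a per-topic downvote rate. The sketch below assumes each vote is already tagged with a topic. The tagging, the minimum vote count, and the 30% threshold are hypothetical choices.

```python
from collections import defaultdict


def weak_topics(votes, min_votes=5, max_downvote_rate=0.3):
    """Return {topic: downvote_rate} for topics above the threshold, worst first.

    votes is an iterable of (topic, is_upvote) pairs.
    """
    totals = defaultdict(lambda: [0, 0])  # topic -> [downvotes, total votes]
    for topic, is_upvote in votes:
        totals[topic][1] += 1
        if not is_upvote:
            totals[topic][0] += 1
    flagged = {
        topic: down / total
        for topic, (down, total) in totals.items()
        # Skip topics with too few votes to say anything meaningful.
        if total >= min_votes and down / total > max_downvote_rate
    }
    return dict(sorted(flagged.items(), key=lambda item: -item[1]))
```

The minimum vote count keeps a topic with two unlucky ratings from looking like a systemic problem.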

These 8 Tips Are Your Starting Point

The organizations getting the most out of answer engines are not always the ones using the most advanced AI. They are the ones that treated this as a real project. They worked on knowledge quality before automation. They planned for failure before they worried about scale. They built governance in from the start.

A well-built answer engine makes your organization's knowledge available, consistent, and fast at a scale no team can match alone. But that only works if users trust what they are getting back. Trust comes from reliability. Reliability comes from doing the foundational work well.

The question has not changed since people first started writing things down: how do you get the right answer to the right person?

The tools have just made it a lot more interesting.

FAQs

AI answer management is the practice of capturing, organizing, maintaining, and delivering accurate answers at scale using AI. It matters because the technology is only as good as the knowledge behind it. Without clear ownership, a single source of truth, and regular maintenance, AI systems generate inconsistent or outdated answers that quietly erode user trust.

Answer management is about getting your knowledge in order: what the right answers are, who owns them, and where they live. Answer automation is about delivering those answers without human involvement every time a question comes in. Answer generation is where AI reads your content and composes a response shaped around the specific question being asked. Each layer depends on the one before it working well.

There is no single fix but there are several things that help. Grounding the AI in your own verified data through RAG reduces hallucination. Source citations let users trace where an answer came from. Assigning questions for human review catches edge cases. And upvotes and downvotes give you a running picture of which answers are holding up and which ones need attention. The goal is to build in enough oversight that problems surface before they become trust issues.
