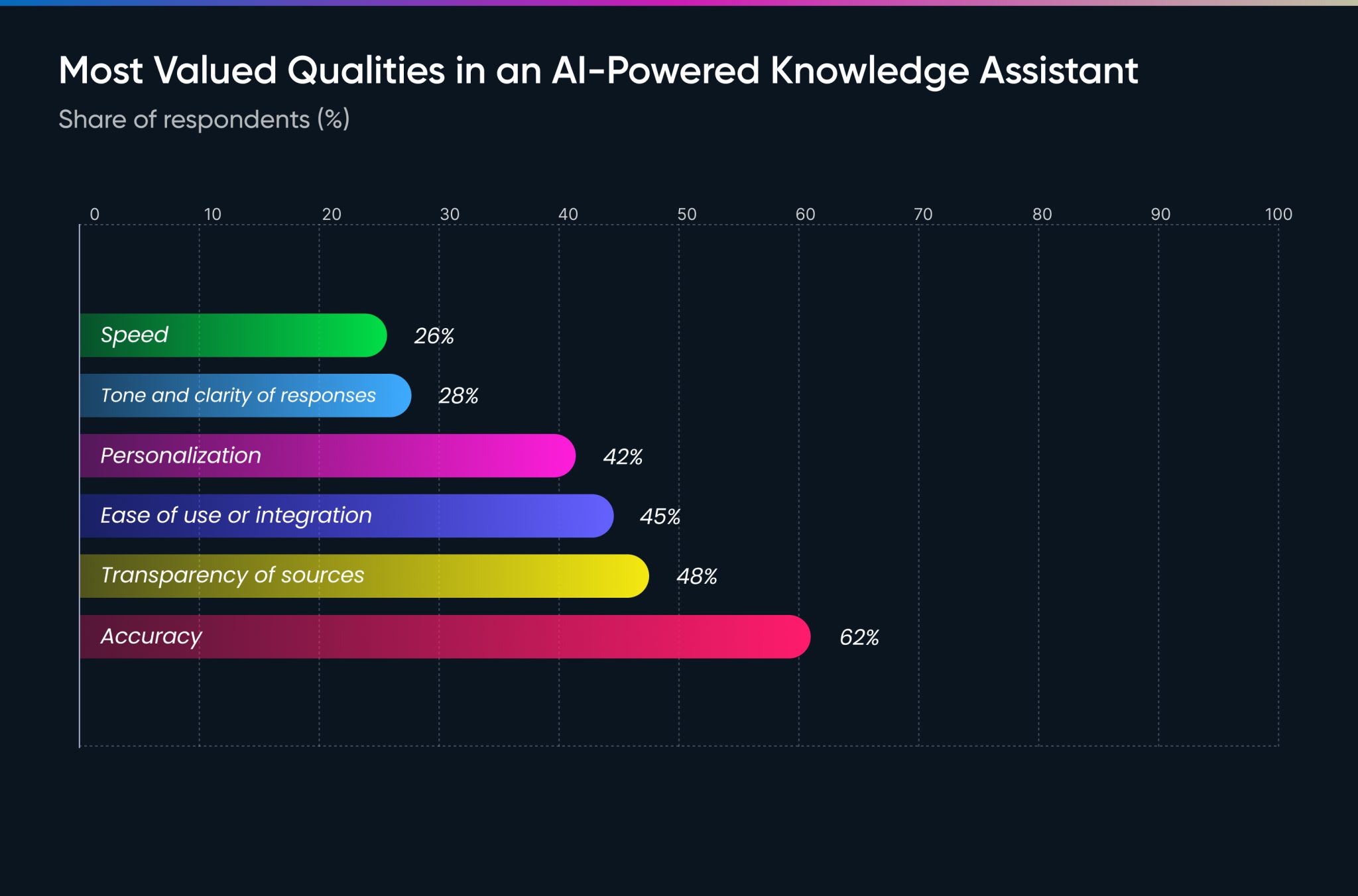

In our State of AI Knowledge Management report, we surveyed hundreds of AI users across sales, marketing, operations, finance, and HR departments. It turns out: speed is not a primary concern.

We asked users to rank the most valued qualities in an AI-Powered Knowledge Assistant. When asked what matters most in their AI outputs, users placed accuracy at the top. Speed ranked last.

Accuracy is the Primary Metric for AI Tools

The marketing narrative around AI has centered heavily on doing more, faster.

The survey data does not support this as a dominant user priority. Instead, users told us:

- Accuracy of outputs is the most important factor

- Transparency of sources is a close second

- Speed, while a key marketing message of most AI tools, is not a deciding factor

Users tell us they're willing to wait longer for more accurate results. That aligns with newer model behavior that employs extended reasoning pipelines: models may take longer to reach a conclusion, but the wait is acceptable if it produces higher-quality outputs.

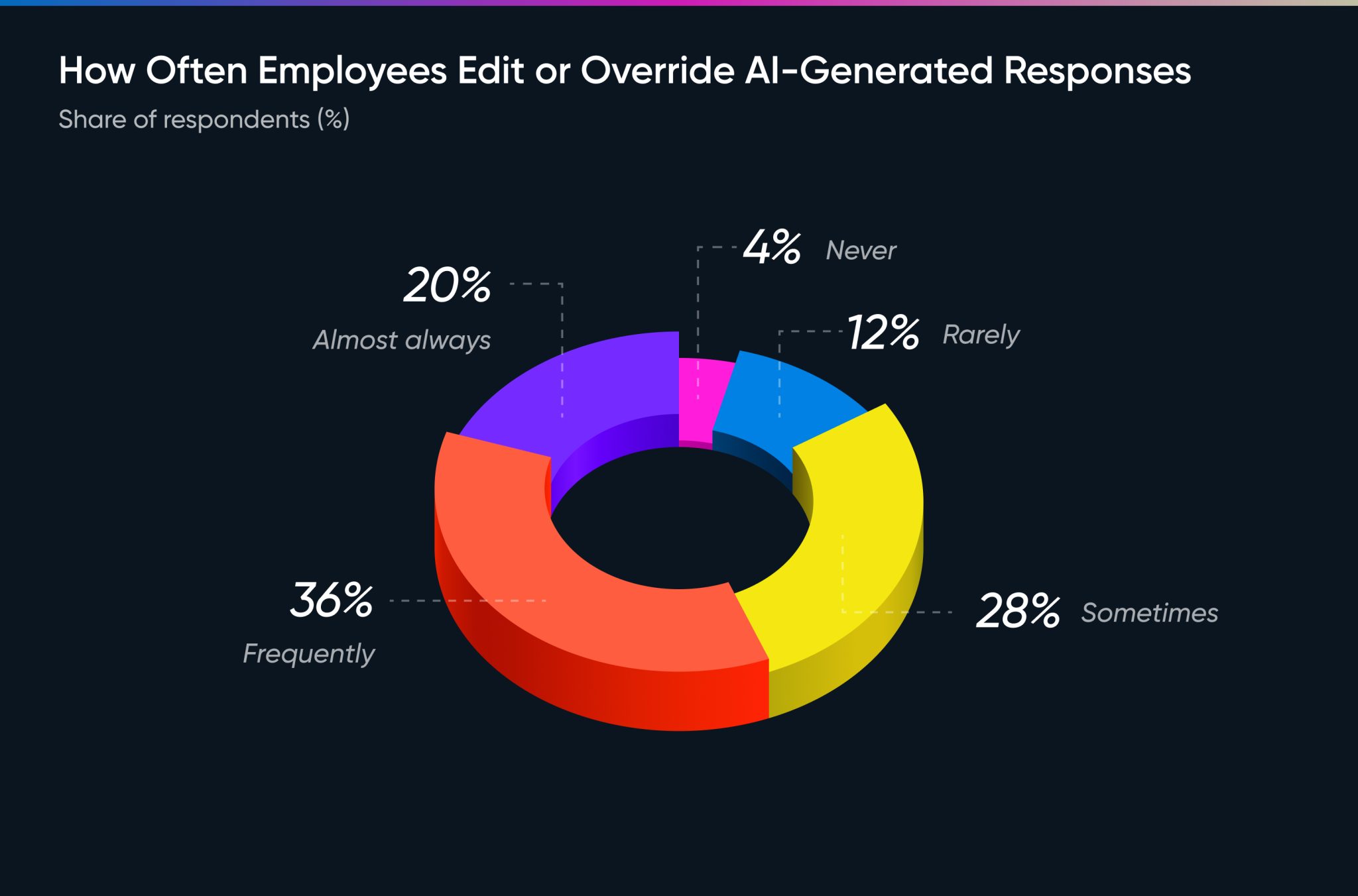

The Human-in-the-Loop is Not Going Away

We asked users whether a human curates AI outputs before use.

97% said yes.

That means only 3% of respondents are presenting AI-generated outputs without human review or revision.

Large Language Models, in their current state, are not operating autonomously in knowledge work environments. They're not trusted enough (and for good reason).

The tolerance for flawed outputs probably varies by use case. For example, engineers may have a high tolerance for poor-quality code because they can refine and improve it before shipping a feature. A great engineer will know whether AI slop code is suitable for use and can opt out of using it.

But a newly hired marketing manager may not have that safety net. When generating marketing content, they might not realize that the output they've been given is hallucinated text claiming product features that don't exist. The risk here is high.

This seems counter-intuitive. You would expect the risk of bad code to be higher than the risk of bad marketing copy. But it is precisely because AI-generated code is seen as higher risk that developers are more vigilant about the outputs.

Bad AI Outputs Create More Work for Humans

This is where it gets messy. The data we're seeing suggests a misalignment between how AI applications are positioning themselves – speed – and what users actually value – accuracy.

We asked users how often they edit AI-generated outputs.

96% reported editing or overriding AI outputs, with more than half doing so frequently or almost always. That flies in the face of the argument for "removing workload" or reducing headcount.

In fact, a Harvard study found that AI does not reduce workload but instead intensifies it. This makes sense if you now spend time validating AI output, a task that didn't exist before.

How are AI vendors to make sense of all this?

Perhaps we can expect marketing to shift as AI adoption matures. Tools that offer fast AI slop output will get sidelined by higher-quality reasoning systems.

Or maybe we end up overwhelmed by long answers to basic tasks/questions. Time will tell.
