For about a quarter of a century, the process of searching for things online has not really changed:

- You type a keyword.

- You get a list of blue links.

- Then you do the hard part. You click and you skim and you hit paywalls.

- You go back and you piece together the answer yourself.

The arrival of generative AI changed that by moving the focus from finding links to getting direct answers. For years, traditional search was the standard, but it often felt like a chore. Now people are tired of hunting for links. They just want the answer. In fact, most employees now rely on AI to get internal info on stuff like pricing, security policies, and operations.

How Traditional Search Evolved into Answer Generation

Google has been the boss for 25 years. But the link and result model is finally cracking. The internet is too full of noise. Most content is written for bots, not people. We have reached a point where we are dealing with a surplus of noise rather than a lack of information.

Then you have fragmented data. Your best answers are usually trapped in weird places. They are stuck in PDFs, Slack threads, or private databases. Traditional search bots cannot even get in there.

The numbers make this concrete. According to our 2026 State of AI in Knowledge Management report:

- 58% of professionals say information is scattered across multiple tools.

- 48% say they deal with outdated or wrong content.

- 26% say nobody is actually in charge of the information.

This fragmentation is why enterprise search is so difficult today. The old search model is failing because it stops at the finding stage. That leaves the most exhausting part, which is the actual answering, entirely on your shoulders.

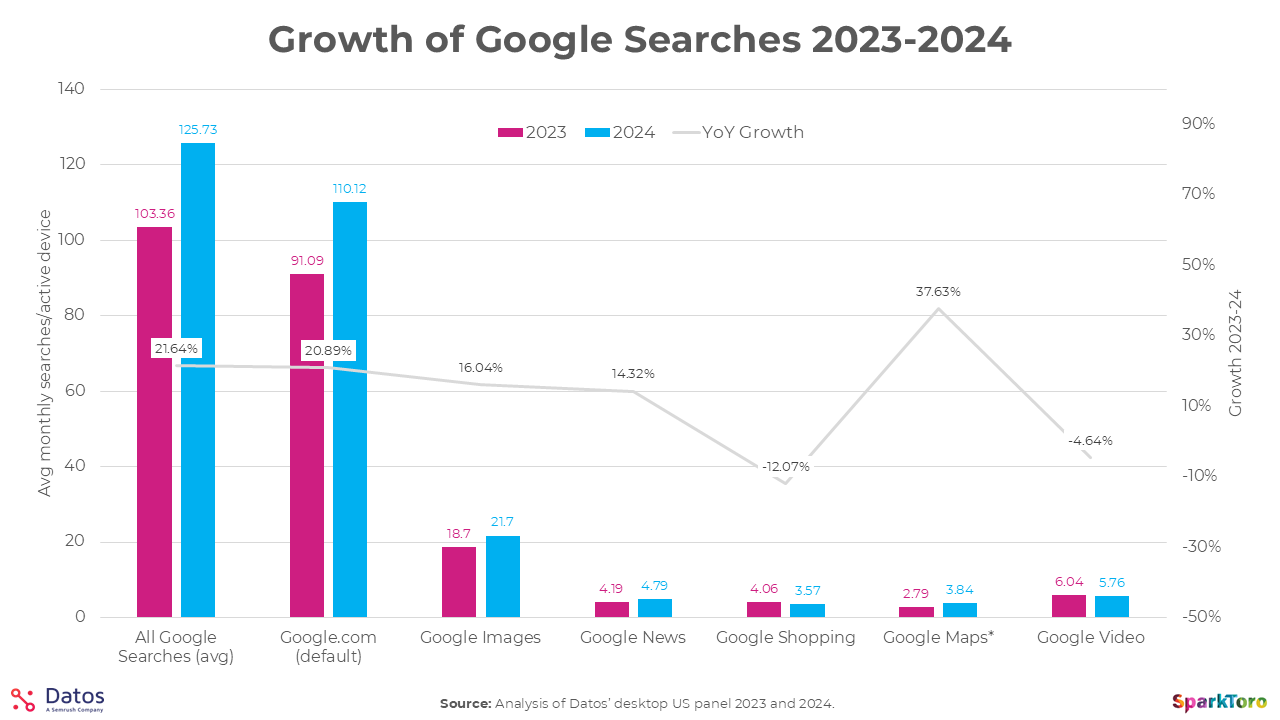

That said, search is not shrinking by any means. According to a 2024 analysis by Datos and SparkToro, average monthly Google searches per device grew by over 21% year on year, from 103 to 125 searches per active device.

Search is the foundation. Answer engines are what gets built on top of it.

No matter what you were looking for in the old model, you were always the one doing the synthesizing. The search engine found things. You figured out what they meant.

Here is what that shift actually looks like:

Generative AI systems now connect documents, policies, and approved answers into a single layer. Employees can query this information using natural language instead of just keywords. The focus stops being about where you stored the file and starts being about what you actually need to know.

What is an Answer Engine?

An answer engine is LLM-powered software that delivers answers by looking at multiple search results and sources at once.

Unlike a traditional search tool that just ranks links, an answer engine puts the information together for you. It acts like a digital researcher, taking on the role a human used to play when they had to manually search Google and piece together an answer.

Answer engines sit on top of search. Most of these systems depend on traditional search, because their answers are often based on underlying results from engines like Google or Bing. Think of search as the tool that finds the data. The LLM is the brain that explains it and gives you a response in natural language.

Instead of you sifting through information, the model sifts through it for you and gives you a direct response.

Types of Answer Engines

There are three ways these things usually work. Each one trades off speed, accuracy, and control in a different way.

1. LLM Only (Model Answer)

This is like using the basic version of ChatGPT, Claude, or Copilot to ask a question. You might ask who the president is or what the capital of France is, and it answers from the model's own knowledge. It is delivering a generated answer based on its foundational training data.

The benefit

Speed. You get an answer as quickly as possible without the system needing to do an additional search.

The downside

You are limited by the model's training data. If it does not have the latest news, it cannot give you an accurate answer.

Lack of access

A standard model does not have knowledge of your internal systems. It cannot see your private company files because that information was never part of its training.

2. RAG (Retrieval-Augmented Generation)

RAG is the professional standard for business. It is an approach where model-based answers are combined with a search of your own documents or sources. You are basically taking a very smart model and giving it a big chunk of text from your trusted sources to work with before it responds.

The benefit

The AI is given a specific set of files to read before it answers.

The result

Answers are grounded in your real data and trusted sources.

How to spot it

You can tell this is happening when you see citations in the response.

Many popular tools like Dust, 1up, or Glean use this method. Even ChatGPT does this now when it searches the internet to pull in information rather than just relying on its own data.
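To make the flow concrete, here is a minimal sketch of the retrieve-then-generate pattern in Python. It is an illustration under simplifying assumptions, not how any of the tools above are built: retrieval is plain word overlap rather than a real search index, the `Document` class, document ids, and sample texts are made up, and the final call to a model is left out so the example stays self-contained.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str  # hypothetical identifier, reused as the citation label
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its words as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: list[Document], top_k: int = 2) -> list[Document]:
    """Rank documents by how many question words they share; keep the best few."""
    q_tokens = tokenize(question)
    scored = [(len(q_tokens & tokenize(d.text)), d) for d in docs]
    # Drop documents with no overlap, then sort by score, highest first.
    matches = [(score, d) for score, d in scored if score > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in matches[:top_k]]


def build_prompt(question: str, sources: list[Document]) -> str:
    """Assemble the grounded prompt that would be sent to the model."""
    context = "\n".join(f"[{d.doc_id}] {d.text}" for d in sources)
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


# Made-up sample knowledge base.
docs = [
    Document("refund-policy", "Refunds are available within 30 days of purchase."),
    Document("security-faq", "All customer data is encrypted at rest."),
    Document("pricing", "The team plan is billed per seat each month."),
]

question = "Are refunds available after purchase?"
sources = retrieve(question, docs)
prompt = build_prompt(question, sources)
print(prompt)
```

In this example only the refund policy shares words with the question, so it is the only source placed in the prompt. The bracketed ids are what allow the model to return citations alongside its answer.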

Getting this to work well is not easy though. Integration is often a mess because many systems are not well connected.

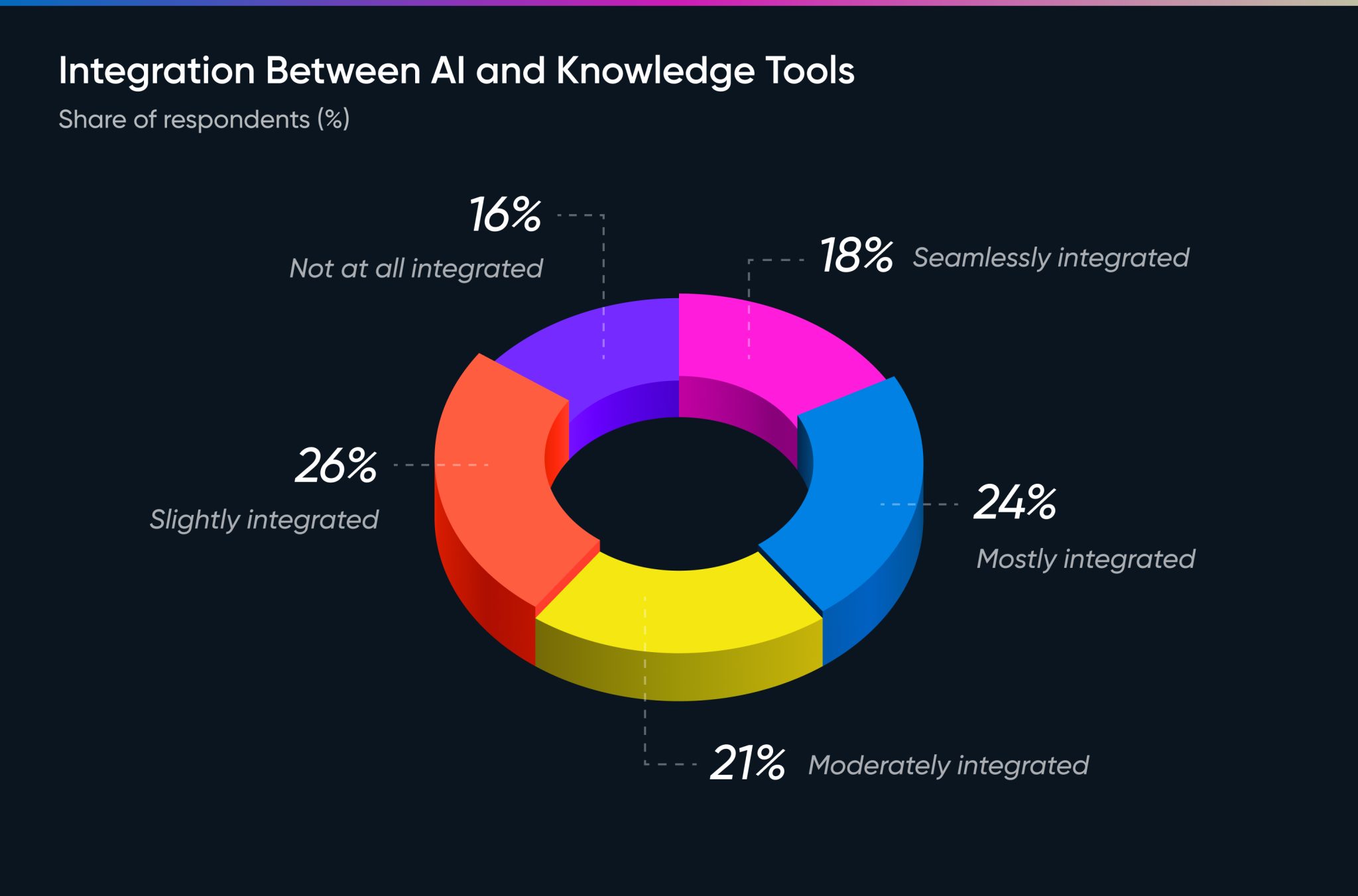

According to our report, only 18% of companies say their AI and knowledge tools are seamlessly integrated. When the AI cannot see all the right sources, employees are forced to double-check answers across different tools, which lowers trust and turns the AI into a starting point rather than a final answer.

3. Structured Answer Engine (List of Pre-Approved Responses)

This type combines the intelligence of a large language model with the curated control of a human who pre-approves responses. The AI understands the intent behind your question, but it pulls the final response from a library of answers that have already been reviewed and approved.

The benefit

You get consistent and predictable answers every time.

The control

Unlike a standard RAG model where you might get ten differently phrased answers for the same question, this system gives you total control over the output.

The reliability

If ten different people ask about a refund policy, they all get the exact same verified response every time.

This approach sharply reduces the risk of AI hallucinations because the final wording comes from a list of approved information that a human has already checked, not from free-form generation. The main remaining risk is matching a question to the wrong approved answer. By combining AI understanding with human curation, you get the speed of an assistant with the accuracy of a human expert.
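As a rough illustration of the idea, the sketch below matches a question to a small library of approved answers and returns the stored text word for word. Everything in it is an assumption made for the example: the `APPROVED_ANSWERS` library, the 0.4 threshold, and the use of simple word overlap (Jaccard similarity) as a stand-in for the LLM that would normally interpret intent.

```python
import re
from typing import Optional

# Hypothetical library: each approved answer is stored under one example question.
APPROVED_ANSWERS = {
    "what is your refund policy": "Refunds are available within 30 days of purchase.",
    "is customer data encrypted": "Yes. All customer data is encrypted at rest.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its words as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words the two sets have in common, from 0.0 to 1.0."""
    return len(a & b) / len(a | b) if a | b else 0.0


def answer(question: str, threshold: float = 0.4) -> Optional[str]:
    """Return the approved answer for the closest example question, or None."""
    q = tokenize(question)
    best_key = max(APPROVED_ANSWERS, key=lambda k: jaccard(q, tokenize(k)))
    if jaccard(q, tokenize(best_key)) < threshold:
        return None  # no confident match: hand off to a human instead of guessing
    return APPROVED_ANSWERS[best_key]


print(answer("What is the refund policy?"))
print(answer("What's your refund policy?"))
print(answer("Do you support single sign-on?"))
```

The first two questions are phrased differently but return the identical approved text. The third matches nothing in the library, so the function returns `None` rather than inventing a response.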

What Are the Key Differences Between Search and Answer Engines?

Understanding the shift to answer engines means looking at who is doing the heavy lifting.

In a traditional search setup, the tool is a retriever. You type in a query and the engine ranks a list of links based on what it thinks is most relevant. From there, the responsibility is entirely on you to click, read, and review those results. You decide which information is best and then manually put those facts together into a clear answer.

Answer engines are built to be synthesizers. They read across multiple search results and documents, then use a probabilistic language model to generate the most likely answer for you. Instead of giving you a list of places where the answer might be, they give you the response directly in natural language.

In search, you put the answer together. In an answer engine, the AI puts it together for you. Traditional search is a great way to find raw data. An answer engine is what you use when you actually need to put that data to work.

How LLMs Changed Information Retrieval

The rise of large language models changed what a good search experience even looks like. For decades, the norm was to search through results and pick the best one. Now we are seeing a shift away from simply searching through links and toward getting a direct answer.

In the past, information retrieval was a manual, multi-step process for the user:

- The Query: You entered keywords or concepts into a search box.

- The Results: You received a list of blue links and snippets.

- The Review: You had to sift through those links to find which one was most helpful.

- The Synthesis: You manually pieced together the information to answer your own question.

LLMs automated the most exhausting parts of that cycle. Instead of making you act as the researcher, the model works through the data and puts together a response for you. It uses probability to generate the most likely answer based on the information it retrieved.

By connecting documents, policies, and approved answers into a single searchable layer, LLMs let employees interact with company knowledge using natural language. You do not have to guess file names or navigate complex folder structures. The model handles the complexity behind the scenes and delivers a finished answer directly.

The Cost of Making Users "Do the Work"

When organizations stick to traditional search, they are essentially asking their employees to act as part-time researchers. Every minute spent toggling between Slack, Drive, and Notion is a minute lost to actual work. And making users synthesize their own answers does not just waste time. It creates a culture of second-guessing that prevents teams from moving with confidence.

Sales leaders, for instance, often have to add extra review cycles because they cannot find a definitive, up-to-date answer quickly. Support agents give inconsistent responses because they are each pulling from different sources. Security teams spend hours manually cross-referencing documents that an answer engine could surface in seconds.

What the Data Says about Answer Generation

A 2023 Gartner survey of nearly 5,000 full-time employees found that almost half of all digital workers struggle to find the information they need to do their jobs effectively.

Nearly a third admitted to making a wrong decision because they simply could not get hold of the right information in time.

At its core, this is a knowledge management failure. Our 2026 report suggests it has not gotten any better.

Here is where the pain is showing up:

The Accuracy Gap

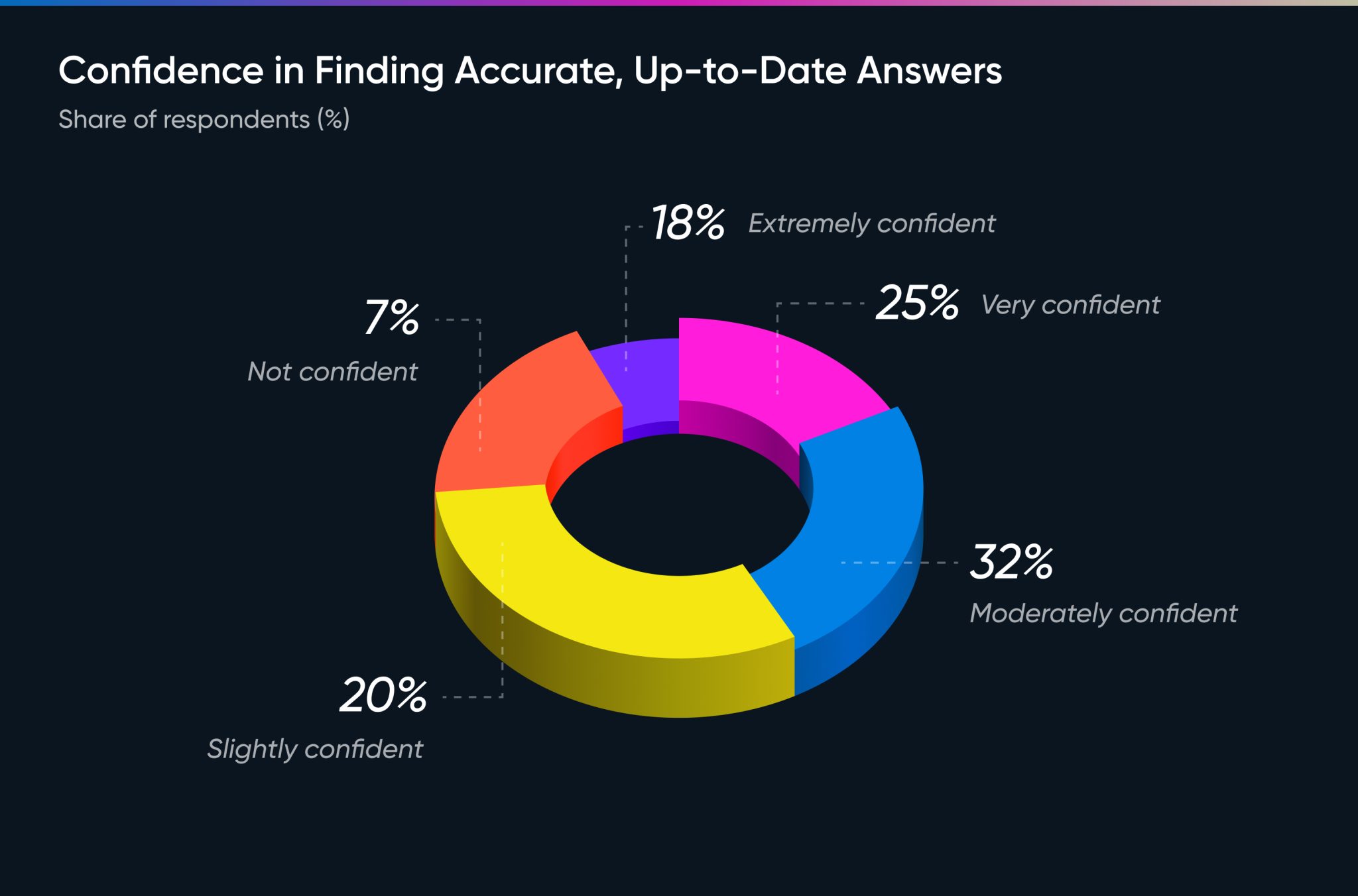

Employees value accuracy above all else, yet only 18% report feeling confident in finding accurate and up-to-date information.

The Ownership Void

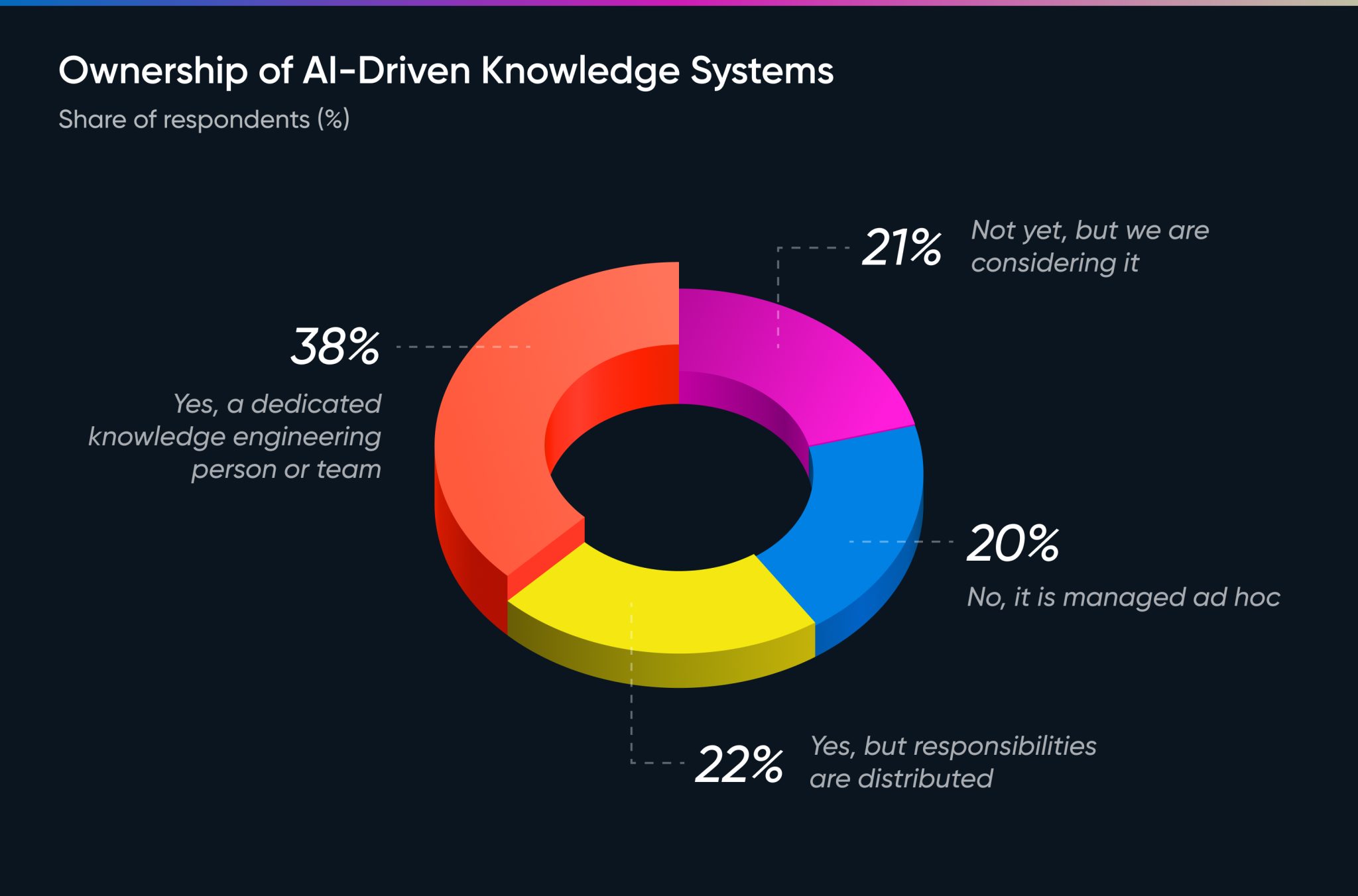

Only 38% of respondents say that there is a dedicated knowledge engineering person or team in their organization. Without a dedicated person to handle the data, it simply rots.

The Trust Gap

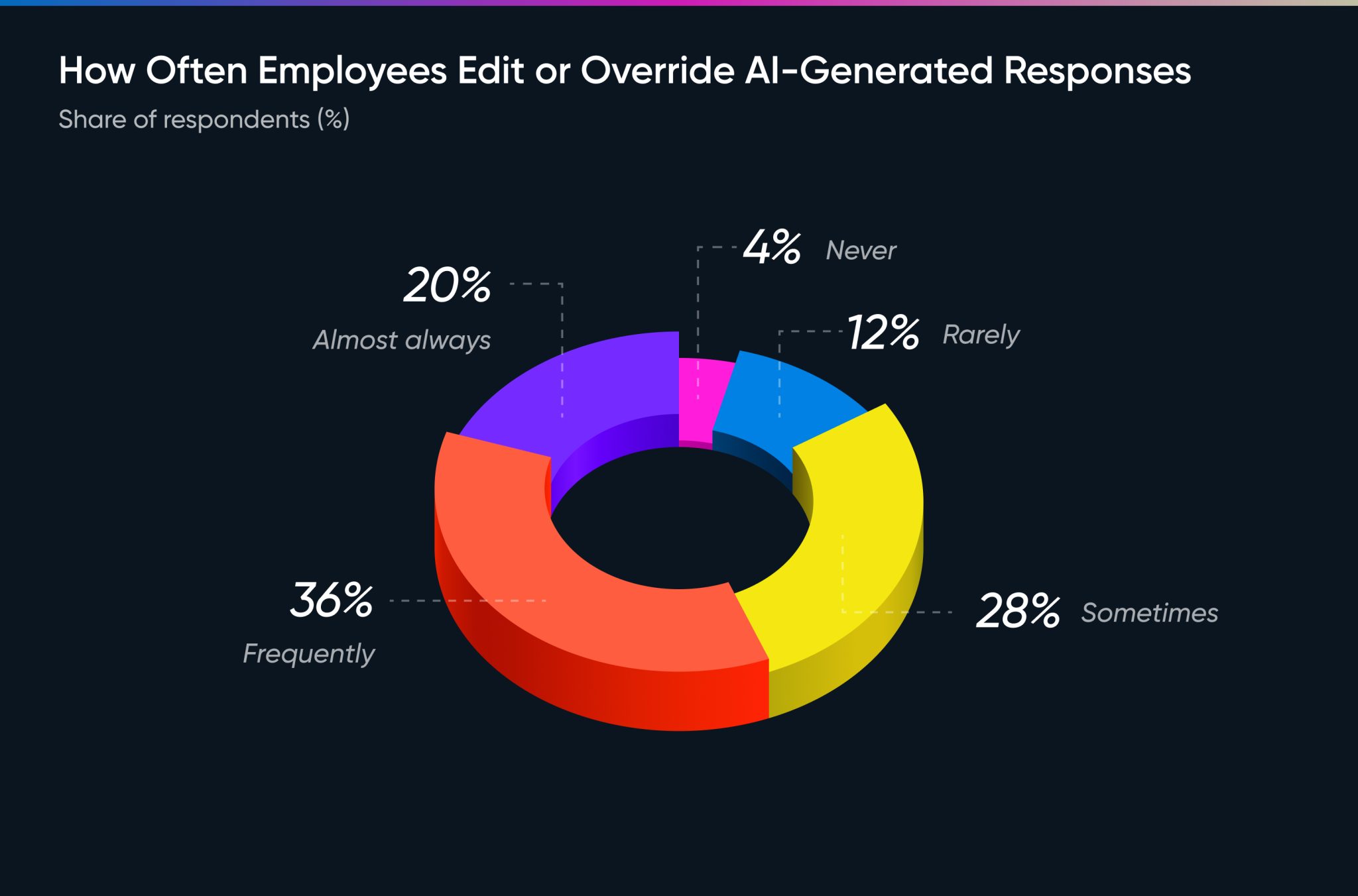

Most employees use AI as a drafting tool rather than a final authority. 56% of employees say they frequently or almost always edit or override AI-generated responses before using them. People are much more willing to wait for a reliable response than to get a fast one they have to second-guess.

Accuracy, Trust, and Source Control

The shift from a search engine to an answer engine is ultimately a shift in trust. In the old world of search, if a link was broken, you blamed the source. In the new world of answer engines, if the AI gives a wrong response, you blame the system. That makes accuracy the most important thing an answer engine can deliver.

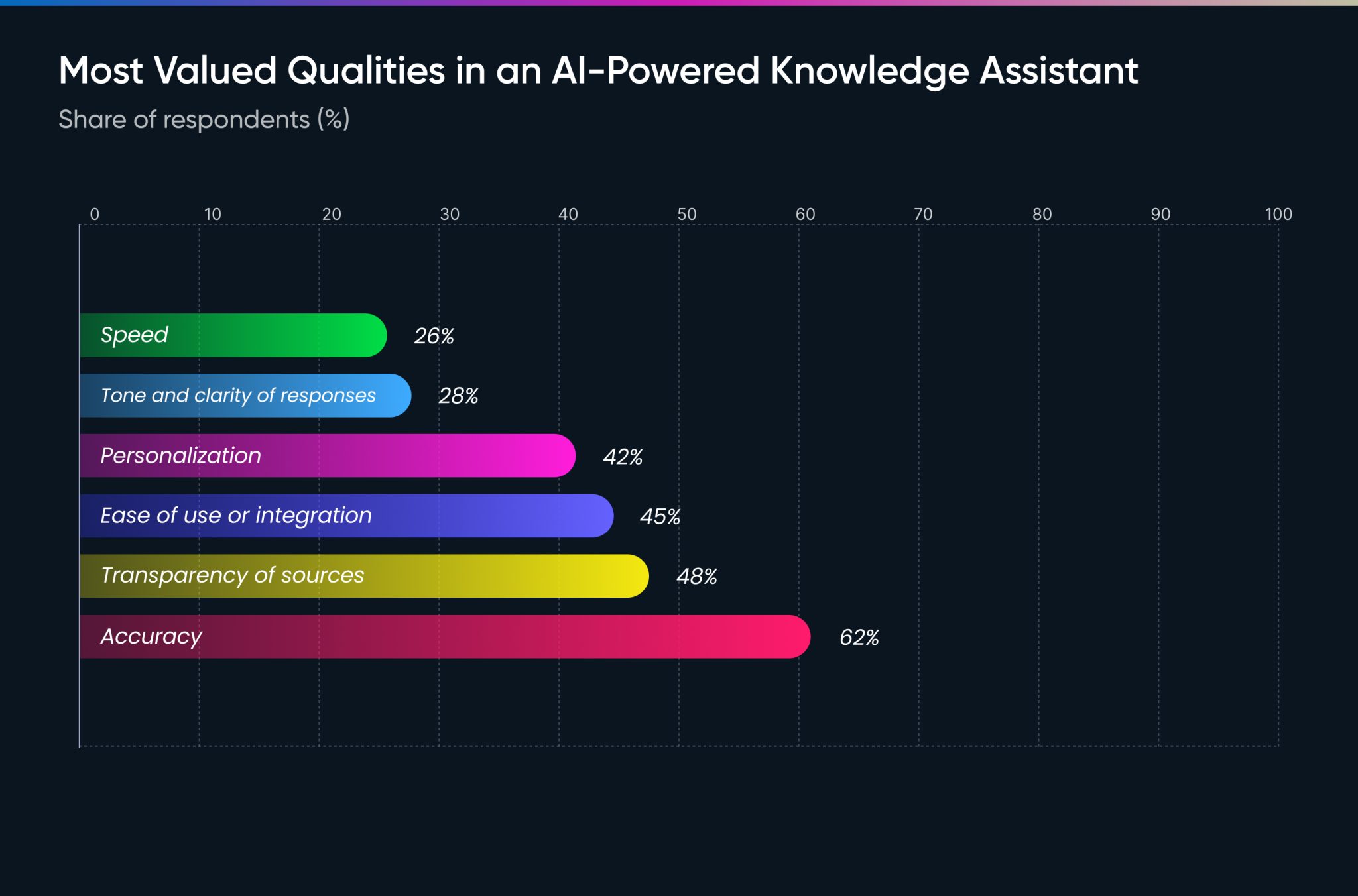

The data shows that users have already shifted their priorities away from speed:

- Accuracy is the top priority for 62% of people.

- Transparency of sources is second, at 48%.

- Speed comes last, with only 26% saying it matters most.

Because trust is still being built, there is a massive amount of manual work happening. Most employees still edit or override AI-generated responses before sending them to a customer. This is a defense mechanism. Without clear source control, users feel forced to act as a human filter because the system has not yet proven it can be left unattended.

To move past this, answer engines need to show their work. This is why RAG and structured answer engines are becoming the standard. When an AI provides a direct citation to a specific document or policy, the trust gap starts to close. Trust is built by making the AI accountable to the source data, not by making it sound more confident.
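One way to hold an answer accountable to its sources is to check its citations automatically before anyone relies on it. The sketch below is a simplified example under stated assumptions: citations are written as `[doc-id]` in the answer text, and the `check_citations` function and the sample ids are hypothetical.

```python
import re


def check_citations(answer: str, source_ids: set[str]) -> dict:
    """Compare the [id] citations in an answer against the sources it was given."""
    cited = set(re.findall(r"\[([\w-]+)\]", answer))
    unknown = cited - source_ids  # cited, but never supplied to the model
    return {
        "cited": sorted(cited),
        "unknown": sorted(unknown),
        # Trust the answer only if it cites something and every citation resolves.
        "needs_review": not cited or bool(unknown),
    }


sources = {"refund-policy", "pricing"}

grounded = check_citations(
    "Refunds are available within 30 days [refund-policy].", sources
)
invented = check_citations("Refunds take 90 days [billing-memo].", sources)
uncited = check_citations("Refunds are always available.", sources)

print(grounded["needs_review"], invented["needs_review"], uncited["needs_review"])
```

Only the first answer passes. The second cites a document the system never supplied, and the third cites nothing, so both are flagged for a human to review.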

How Answer Engines Are Being Used in Sales, Support, and Security

The move from finding links to getting answers is not just a technical change. It is a functional one that is already changing how specific teams operate. Companies are moving their expertise out of static PDFs and into the hands of the people who need it most.

Sales: Closing the Knowledge Gap

In a high-pressure sales environment, speed and accuracy are everything. A salesperson often needs to answer complex technical or pricing questions while on a live call with a prospect. Before answer engines, they had to pause the conversation, search through a giant repository, or wait for a Slack response from a product expert.

Now, answer engines let sales teams query their entire library of case studies, battlecards, and CRM data in seconds. This removes the treasure hunt and lets the salesperson stay focused on the relationship rather than the research.

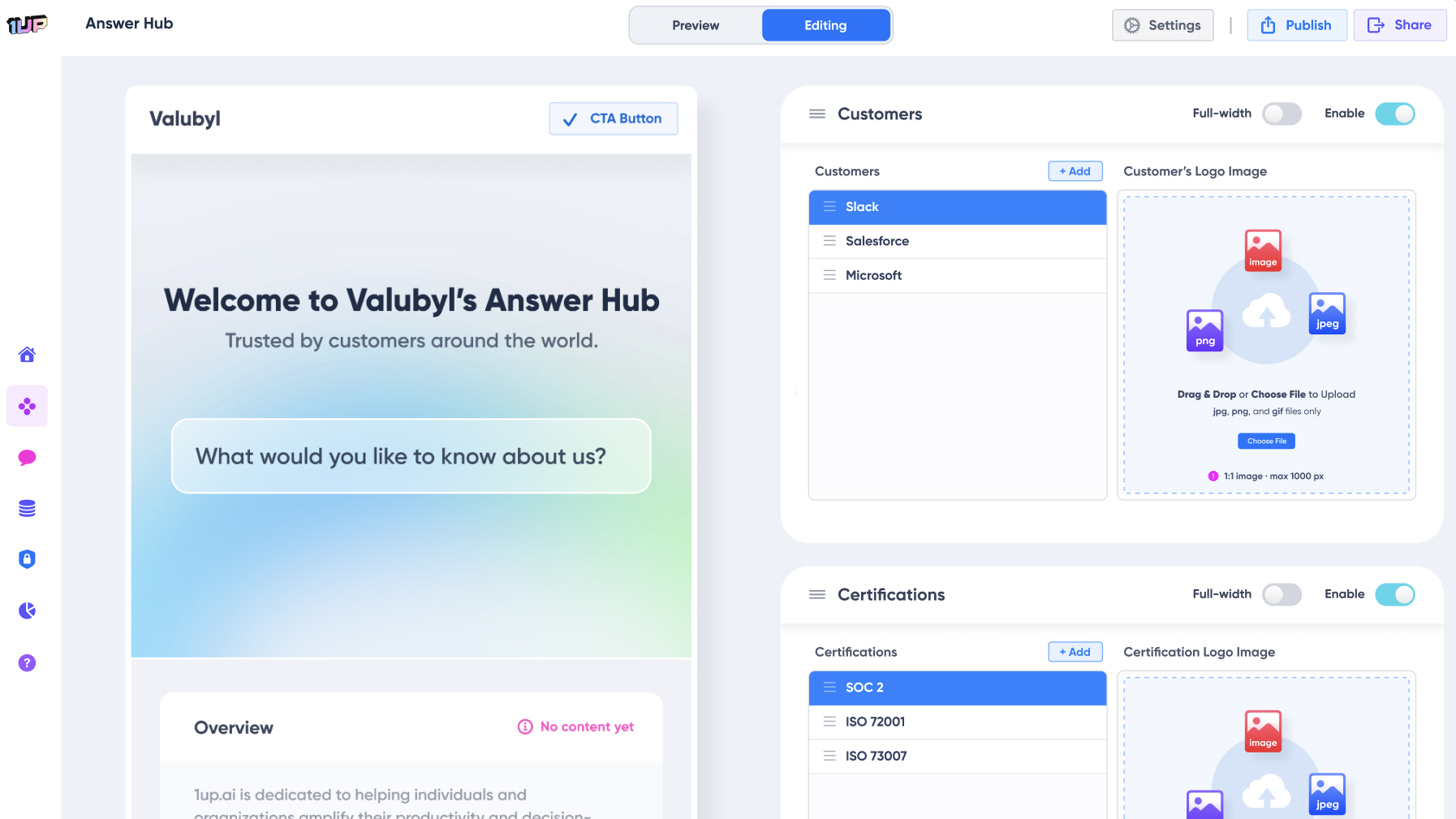

Tool example: 1up lets sales teams build a verified library of answers. When a prospect asks about a specific feature or discount, the rep gives the exact pre-approved response every single time.

Support: Instant Resolution for Customers

Customer support has traditionally been a game of copy and paste from a help center. An answer engine turns this into a dynamic experience. Instead of a customer digging through a FAQ page, the engine puts the documentation together and gives them a direct answer.

This is especially useful for internal support teams dealing with complex policies. Instead of bothering an HR or IT manager, employees get an immediate, grounded answer about company benefits or hardware troubleshooting.

Tool example: Intercom and Pylon use answer engine logic to resolve customer queries instantly. They pull from existing documentation to give a natural language response, only looping in a human agent when the query is too complex.

Security and Compliance: Navigating the Red Tape

Security teams are often buried under massive questionnaires and compliance audits. These documents are usually hundreds of pages long and filled with highly technical content that is hard for traditional search to parse.

An answer engine can ingest these large PDFs and instantly surface the specific clause needed to prove compliance. By providing citations for every answer, the engine makes sure the team can stand behind the data during an audit instead of manually cross-referencing sources.

Tool example: Vanta and Drata use automated systems to monitor compliance, while custom RAG-based answer engines are frequently used to automate responses to complex security questionnaires by pulling from a company's historical security data.

Why Knowledge Ownership Matters More Now

The move toward answer engines has changed the stakes of knowledge management. In the past, if a company wiki was out of date, it was a minor annoyance because the human reading it could usually spot the error. But when an AI is the one reading your data to generate a response, the quality of that data becomes a critical dependency.

Our report found that 65% of respondents say human curation and governance of AI knowledge systems is very or critically important. And yet 20% of organizations are still managing their knowledge ad hoc with no one officially in charge. Another 22% say responsibilities are just distributed across the team with no clear owner.

That gap is where hallucinations and outdated answers come from. Without a dedicated person managing the knowledge layer, the AI's answers begin to rot as company policies and prices change.
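A simple audit can make that gap visible before it shows up in answers. The sketch below flags knowledge entries that have no owner or have not been reviewed recently. The `KnowledgeEntry` fields, the sample entries, and the 180-day review window are all assumptions chosen for illustration, not figures from the report.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class KnowledgeEntry:
    title: str
    owner: Optional[str]  # None means nobody is responsible for this entry
    last_reviewed: date


def audit(entries, today, max_age_days=180):
    """Return (title, reason) pairs for entries that need attention."""
    cutoff = today - timedelta(days=max_age_days)
    findings = []
    for entry in entries:
        if entry.owner is None:
            findings.append((entry.title, "no owner"))
        if entry.last_reviewed < cutoff:
            findings.append((entry.title, "stale"))
    return findings


# Made-up sample entries.
entries = [
    KnowledgeEntry("Pricing sheet", "sales-ops", date(2025, 11, 1)),
    KnowledgeEntry("Refund policy", None, date(2025, 12, 1)),
    KnowledgeEntry("Security whitepaper", "security", date(2024, 6, 1)),
]

findings = audit(entries, today=date(2026, 1, 15))
for title, reason in findings:
    print(f"{title}: {reason}")
```

Here the refund policy is flagged because nobody owns it, and the security whitepaper is flagged because its last review falls well outside the window.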

Meet the AI Answer Engineer

We used to have knowledge managers who spent weeks building folders and tagging files. But these systems often failed because nobody used them, the content got old, and everyone eventually gave up. Traditional knowledge management was a static process that never really scaled.

The AI Answer Engineer is a different kind of role. This person does not just archive stuff. They make the information move. They bridge the gap between human expertise and machine intelligence by ensuring company knowledge actually works for the team.

The Answer Engineer has a few main jobs:

- Connecting data sources: They integrate systems across departments so the AI has access to accurate, up-to-date information.

- Defining retrieval logic: They decide which documents the AI should prioritize when answering a specific type of question.

- Calibrating for accuracy: They monitor responses and grade them to make sure the machine is interpreting company knowledge correctly.

- Optimizing for AI readability: They set standards for how internal documents should be written so an LLM can easily parse and use them.
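To give a sense of what defining retrieval logic can look like in practice, here is a small sketch that orders candidate documents by source according to the type of question. The rule table, source names, and document titles are hypothetical, and dropping unlisted sources entirely is one possible design choice rather than a standard.

```python
# Hypothetical priority rules: for each question type, sources listed first win.
SOURCE_PRIORITY = {
    "pricing": ["approved-answers", "price-book", "slack"],
    "security": ["approved-answers", "security-policies", "wiki"],
}


def prioritize(
    question_type: str, candidates: list[tuple[str, str]]
) -> list[tuple[str, str]]:
    """Order (source, title) candidates by the rules for this question type.

    Sources missing from the rules are dropped rather than ranked last,
    so the model never reads from a source nobody approved for that topic.
    """
    order = SOURCE_PRIORITY.get(question_type, [])
    rank = {source: position for position, source in enumerate(order)}
    allowed = [c for c in candidates if c[0] in rank]
    return sorted(allowed, key=lambda c: rank[c[0]])


# Made-up candidates, as they might come back from a search step.
candidates = [
    ("slack", "Thread: discount for Acme"),
    ("wiki", "Old pricing page"),
    ("price-book", "2026 price list"),
    ("approved-answers", "Standard discount policy"),
]

ranked = prioritize("pricing", candidates)
for source, title in ranked:
    print(source, "-", title)
```

For a pricing question, the approved answer comes first, the price book second, and the Slack thread last, while the wiki page is excluded because it is not an approved source for pricing.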

More teams are starting to understand that an answer engine is only as smart as the person managing its sources. By treating knowledge as an engineering problem rather than a filing problem, these teams keep their AI a reliable asset rather than a liability.

Great Answers Start With Good Knowledge

Search was always about locating information. Answer engines are about delivering it. That is a meaningful difference, and it changes what good knowledge management actually looks like.

The tools are already out there. Most teams are already using some version of an answer engine, whether they call it that or not. The question is whether anyone is making sure it is working correctly. Because an answer engine that pulls from bad sources, or from sources nobody is maintaining, will give bad answers at scale. And it will do so confidently.

That is why knowledge ownership matters more now than it ever did before. The AI Answer Engineer is the person who keeps the whole thing honest. Without that role, you do not have an answer engine. You just have a very fast way to spread the wrong information.

FAQs

What is an answer engine?

An answer engine is LLM-powered software that delivers answers by pulling from multiple sources at once, synthesizing the information rather than returning a ranked list of links.

How is an answer engine different from traditional search?

In traditional search, the tool retrieves and ranks links and the responsibility for reading, comparing, and synthesizing falls entirely on the user. Answer engines flip this by using AI to do that synthesis and deliver the answer directly in natural language.

Why does knowledge ownership matter for answer engines?

Without a dedicated person managing the knowledge layer, AI responses degrade over time as company policies and prices change, making hallucinations and outdated answers inevitable.

