You asked your AI a question. It gave you an answer and slapped a 94% confidence score on it. It sounded right, so you trusted it. Turns out the answer was wrong.

This happens more than most vendors want to admit. Users walk away thinking a high score means a good answer. They make decisions based on it. And then somewhere down the line, someone catches the mistake and starts asking why the AI seemed so sure of itself when it was completely off base. It's one of the more persistent myths about AI.

The score didn't lie exactly. It just wasn't measuring what you thought it was. And that's the whole problem.

What is an AI confidence score?

An AI confidence score is a number that tells you how certain an AI is that its answer is correct. It usually shows up as a percentage. High scores are supposed to mean the answer is accurate. Low scores are supposed to flag that something is off and a human should probably take a look.

Are AI confidence scores a scam?

Short answer: no, not exactly.

They're not made up. They're not always dishonest. The real issue is that they are often implemented in unreliable ways. And when a vendor shows you a score, you have no idea how it was made. That's where things go wrong.

There are two scoring methods that show up constantly. Both of them look reasonable on the surface, but may end up leading you astray.

Bad Method 1: The AI grades its own answer

This one is everywhere, and it's a bad idea.

Think about what's actually happening here. The AI wrote an answer. Now you're asking that same AI how good the answer is. That's like handing a student their test and asking them to grade it themselves. No answer key included.

If the AI already thought the answer was bad, it probably wouldn't have written it. Asking it to score itself after the fact doesn't give you new information. You're just asking it to guess at its own quality.

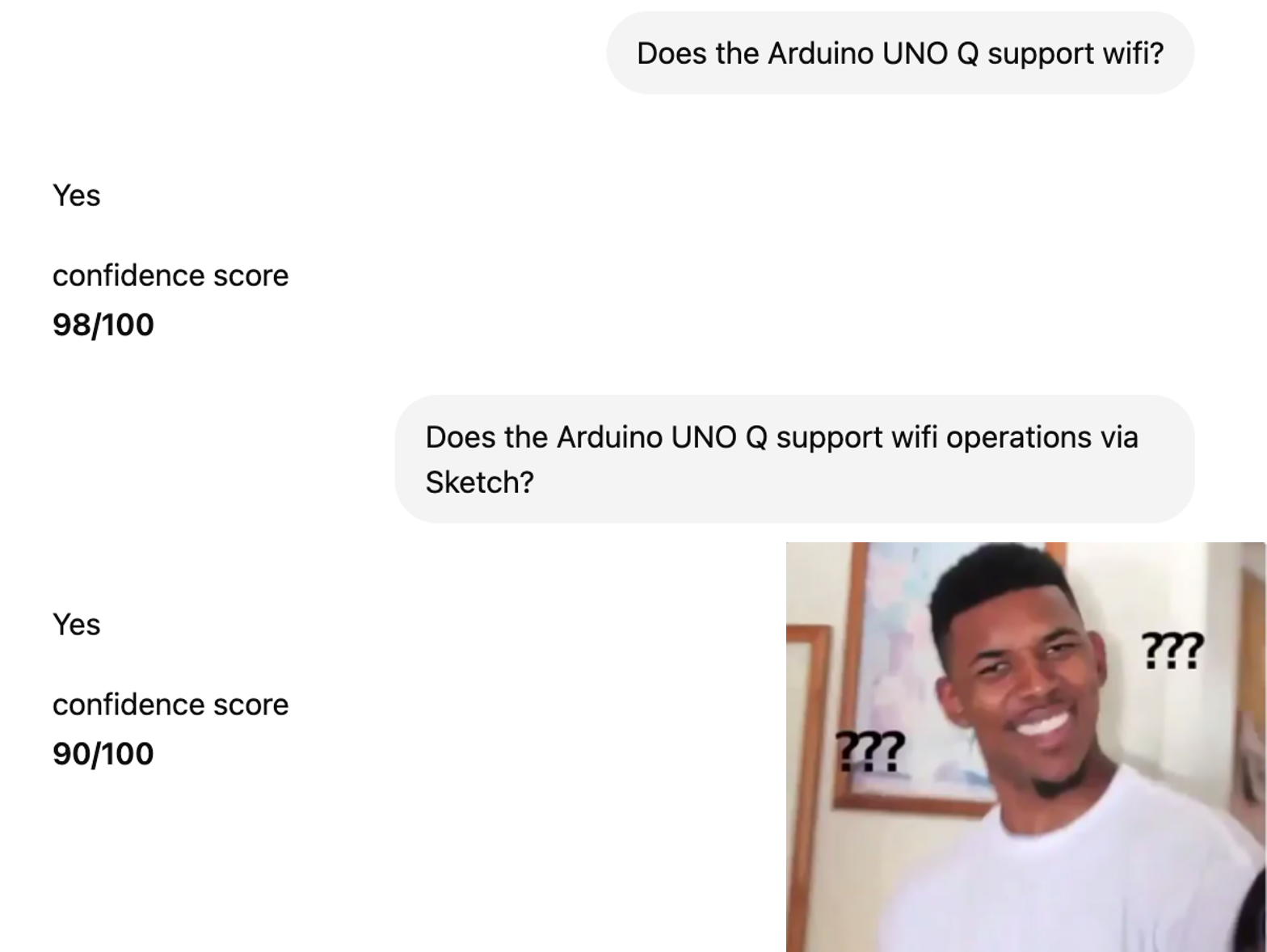

Here's a real example of how this plays out.

You ask: "Does the Arduino UnoQ support Wi-Fi?"

The AI says yes. Confidence score: 98%. Sounds great. You move on.

Then you ask something slightly more specific: "Does UnoQ support Wi-Fi via sketch files?"

The AI still says yes. But now the score is 90%.

Wait. What happened? Is 90% bad? Does that make the 98% suspicious? Why did it drop?

The actual answer, if you dig into the docs, is more nuanced. The UnoQ runs Linux on the board, so Wi-Fi works through Python, not directly through sketch files. The AI's original answer wasn't totally wrong, but it was missing important context. And neither score told you that.

That's the core problem. A score of 90% sounds pretty good. But it doesn't tell you the answer was incomplete. A score of 98% sounds very good. But it doesn't tell you whether the answer was actually right. And bad answers can absolutely come back with high scores. That happens all the time.

The scores in the middle are the worst part. They feel informative, but they're not. You're still unsure if it's a good answer.

If a vendor or tool is generating scores this way, just ask them to turn it off. Those numbers aren't giving you anything useful.

Bad Method 2: Using search relevance as a proxy for confidence

This one is a little harder to spot because it sounds more technical.

Here's what's going on under the hood. When you ask an AI a question, it's usually pulling documents from somewhere (a knowledge base, a database, internal files) and ranking them by how relevant they are to your question. Similar to how Google returns search results. The documents at the top are the most related to what you asked.

Some vendors take those relevance scores and show them to you as a confidence metric. The logic sort of makes sense on paper. More relevant documents should mean a better answer, right?

Here's where that falls apart.

You ask: "Does the product support multi-factor authentication?"

The system finds a datasheet about MFA, a product page about MFA, and a few other docs. All clearly related to MFA. The search relevance is high. The AI says: "90% confident."

But when you actually read those documents, you find out the company stopped supporting MFA six months ago. The answer the AI gave you was wrong.

How did it score 90%? Because the documents were related to the topic of MFA. That's all the score measured. It had nothing to do with whether the answer was accurate.

Search relevance != confidence.

Relevance scoring and accuracy scoring are two completely separate things. The AI found the right topic. That's not the same as finding the right answer. Presenting a search score as a confidence score will mislead you every time.

The Best Ways to Get Accurate Confidence Scoring

Okay, so those two methods are out. There are approaches that hold up better.

Humans

This is still the gold standard. No scoring system beats a real person deciding whether an answer is good or bad.

Thumbs up, thumbs down, flagging wrong answers, correcting responses. All of that is genuinely valuable. It creates a feedback loop that actually means something. AI systems get better when humans are involved in grading them, and that's going to be true for a long time.

Here’s how 1up allows users to upvote or downvote answers:

A separate model built for evaluation

You can use AI to grade AI, but only if the evaluator is a completely different model from the one giving answers.

The idea is a model that's been trained specifically to judge response quality, trained on past outputs and built to understand what a good answer looks like versus a bad one. That kind of model can give you a score that actually means something, because it wasn't the one that wrote the answer in the first place.

Most vendors don't do this. What they usually do is ask the same model, or a very similar one, to evaluate its own output. That runs into the same problem as Bad Method 1. A purpose-built evaluation model is the right call. Most companies just haven't built one.

Binary Answers Over Scores

For Q&A tools, RAG systems, and anything that answers questions, a simple yes/no signal is more useful than a score.

At 1up, when a question can't be answered well, we don't show a 67% and leave the user to figure out what that means. We want 1up to explain what's missing and point toward a better answer. That's more useful than a number that might mean nothing and it's critical for reliable enterprise knowledge management systems.

A score gives you a feeling. An explanation gives you something to do with it.

Why LLMs can't score themselves accurately

So those are the two bad methods. But the deeper question is why the AI can't just do this reliably on its own. There are a few reasons.

LLMs have no built-in calibration

To understand this, it helps to know what a token is. A token is a basic unit of text that an LLM processes, roughly a word or part of a word. When a model generates an answer, it assigns probabilities to each token one at a time, picking what comes next based on patterns in its training data.

Those probabilities reflect what sounds right, not what is right. A high-probability token isn't guaranteed to be correct. A low-probability token isn't necessarily wrong. The model is predicting language, not verifying facts.

“When you ask an AI for a confidence score, you're asking it to grade its own answer. If the AI thought the answer was bad, it probably wouldn't have generated it in the first place. You need a completely separate entity, human or otherwise, to determine the true quality of a response.”

Manoj Abraham, Founder @ 1up

LLMs are overconfident by design

LLMs are optimized to produce fluent, natural-sounding text. That's actually the problem. A fluent answer feels authoritative even when it's wrong. Models don't naturally hedge with phrases like "I think" or "I'm not sure" unless you specifically ask them to. They just answer, confidently, every time.

Ed Poon, Founder and CTO at 1up said,

“We had a customer run a comparison test of multiple vendors who advertise Confidence Scoring. They found that pretty much any response scored below 80% was unusable.”

LLMs have no ground truth awareness

A model doesn't know whether its answer is factually correct. It has no built-in way to check its output against reality. At best it's drawing from patterns in its training data. At worst that data is outdated or just wrong. This is closely related to how AI hallucinations happen and why they're so hard to catch.

This is where Retrieval-Augmented Generation (RAG) systems help. A RAG setup pulls answers from a specific knowledge base, which gives the model a source of truth to work from and makes it possible to cite sources and audit responses. But even with RAG in place, asking the model to score itself doesn't solve the problem. It's still self-assessment.

LLMs aren't trained to admit uncertainty

The goal during training is to minimize next-token prediction loss, meaning the model gets rewarded for generating plausible text. It gets no credit for saying "I don't know." So it won't. Even newer models that sometimes express uncertainty are usually doing it because they were prompted to, or because they've seen enough examples of hedging language to mimic it. It's not the same as actually knowing when to stop.

Token-level confidence doesn't equal answer-level accuracy

Even if you could average up the probability scores across every token in a response, that number wouldn't tell you much. The relationship between token confidence and factual accuracy is nonlinear. An answer can be written in perfectly fluent, high-probability language and still be completely wrong. Token-level confidence is what LLMs actually expose. Whether the whole answer is reliable is a different question entirely, and one the model can't answer for itself.

Where are confidence scores actually used?

A lot of industries use these scores to decide when to trust the AI and when to bring a person in. Here are some real-world examples:

- A bank might use AI to review transactions in real time. If the model is highly confident a transaction is fraudulent, it gets blocked automatically. Lower confidence sends it to a fraud analyst.

- An accounting team processing thousands of invoices might tell their AI to only accept extractions above 90% confidence and flag everything else for manual review.

- A hospital radiology tool might use confidence scores to decide whether a scan needs a radiologist to double-check it before anything moves forward.

In those cases, confidence scores are genuinely useful. There's a clear threshold, a human fallback, and real consequences if the AI gets it wrong.

Confidence scores are most problematic in conversational AI tools and Q&A systems, which is where most people actually encounter these scores day to day. In these products, the scores are often generated in ways that have nothing to do with whether the answer is actually correct. You can get 95% on a wrong answer. You can get 70% and have no idea if that's supposed to worry you.

What to Ask Your Vendor About Confidence Scores

Confidence scores are not automatically useless. Some are built with care and actually mean something. The problem is that most of the ones you'll see in the wild are built on the two broken methods above, and there's no way to know without asking.

If a tool you're using shows confidence scores, ask your vendor three things:

- How are these scores being calculated?

- What's the source of the underlying data?

- Is a separate model being used to evaluate answers, or is it the same model?

If they can't answer clearly, or if the scores consistently don't match reality, turn them off. You'll make better decisions without them.

FAQs

Not automatically. A high score only means the AI was confident, not that the answer was correct. It depends entirely on how the score was generated. If the vendor is asking the same model to grade itself, the score doesn't tell you much.

Search relevance measures how closely related the source documents are to your question. A confidence score is supposed to measure how accurate the answer is. They're two different things, but some vendors present relevance scores as confidence scores, which can give you a high number even when the answer is wrong.

Ask your vendor how the scores are calculated, where the data comes from, and whether a separate model is being used to evaluate answers. If they can't answer clearly, the scores probably aren't reliable enough to act on.

1up your sales team

%20(1).jpg)