A Request for Proposal (RFP) can take a long time to complete. If you've ever filled out one of these questionnaires, you know what we're talking about. And unless you're one of the many users automating RFP responses with 1up, you're probably unhappy with how tedious and painful the process is.

But what makes the response process so slow?

That's what we wanted to figure out. So we reached out to one of our customers and offered to manually complete one of their questionnaires.

For this experiment we used a simple RFP written for an enterprise software company specializing in Identity Access Management. It was a vanilla questionnaire consisting of 82 rows with a 300-word limit for answers. That means answers can be as short as "Yes" or about twice as long as this intro.

We had one rule: no automation. We wanted our experience to be as close to the user's pain as possible in order to truly understand what makes the RFP process so slow.

Fortunately, our customer was kind enough to give us access to a knowledge base they were using for questionnaires – which would give us great content to work off. So we grabbed a Red Bull, put on a Spotify playlist, and got to work.

1. Manually writing answers +5 hours

This RFP had a total of 82 questions and a cover page.

Filling in the answers took us a little over 5 hours over the course of 2 business days. In some cases, we copy/pasted previous answers or relevant excerpts – but still needed to rephrase them to match the context of the question being asked.

Our answers ranged from approximately 20 to 300 words. We found ourselves coming up against the word limit on some of these responses. In these cases we would shorten our answer and reference external sources for more information.

So how did we do? A typical typing speed is about 40 words per minute. Our average answer length was 142 words, so…

(80 answers * 142 words) / 40 words per minute = 284 minutes (4.7 hours)

Surprisingly, that estimate is almost exactly the 5 hours we spent typing out responses. That's excluding the time we spent searching for answers (more on that below). The content was unfamiliar to us, so the searching probably took us longer than it would have taken our customer.
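For anyone who wants to run the same back-of-the-envelope math on their own questionnaire, here is a minimal sketch of the estimate above. The function name `typing_minutes` is ours, made up for illustration; the inputs are the numbers from this experiment, and the result only covers raw typing time, not searching or rewriting.

```python
def typing_minutes(answers: int, avg_words: float, wpm: float) -> float:
    """Rough typing time in minutes: total words divided by typing speed."""
    return answers * avg_words / wpm


# Numbers from this experiment: 80 answers, 142 words on average, 40 words per minute.
estimate = typing_minutes(answers=80, avg_words=142, wpm=40)
print(f"{estimate:.0f} minutes ({estimate / 60:.1f} hours)")  # 284 minutes (4.7 hours)
```

Swap in your own row count, average answer length, and typing speed to get a floor for how long the writing alone is likely to take.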

Speaking of pencils…

As a side quest, we tried handwriting the RFP to see how long that would take.

Obviously, this was a mistake. We got through 7 rows before carpal tunnel started to kick in and we gave up. 🤷🏾‍♀️

2. Searching for answers in different places +2 hours

Finding answers was challenging because we were dealing with several unfamiliar data sources. Our knowledge base consisted of:

- 2 previous questionnaires stored on our desktop

- 100+ support pages on a website

- 24 product docs in Confluence

- A mix of compliance content in Drata

- 50+ sales assets and solution briefs in a Google Drive folder

It took us just over 2 hours to find the right data sources needed for our responses. That's excluding any time spent typing. We measured this using a tool that tracks screen time spent in an application (finally, a good use for spyware).

The most annoying part of this process was context switching between compliance, security, product, and sales messaging. These data sources are all structured differently so it's tough to just CTRL+F the answer you're looking for.

Another issue with storing all of this great data separately was that it was often buried alongside irrelevant information. For example, one section of RFP questions had us digging through sales assets to find performance benchmarks. We assumed this information would be in the product documentation in Confluence.

We were wrong.

It's painful and repetitive

RFP questionnaires are probably the worst part of any sales process. Searching through a knowledge base of answers takes forever, and you almost always end up having to write a new response.

Mike Rosengarten, Founder & CEO, Camino

3. Navigating a knowledge management tool +2 hours

One of the tools provided to us was an RFP management system our customer had used to store FAQs and previously-answered questionnaires.

We started here because we thought that the previous questionnaires would have relevant answers we could just copy/paste. But we soon discovered that every answer we found was similar in meaning but just different enough that we had to rewrite it. The time spent in this tool was a little over 2 hours of searching for and comparing similar answers.

Going down the knowledge base rabbit hole set us back quite a bit and taught us a few things about the state of RFP response software:

- There are a ton of great knowledge management tools and methods. Unfortunately, they don't easily position you for knowledge automation as you still need to manually look up, understand, and retrieve the data you need.

- Even though this rich data was already structured, we couldn't simply copy it over to our new questionnaire. Context, phrasing, and specific answers are different. We essentially ended up regenerating answers by hand.

- Q&A isn't everything. We still needed the ability to generate one-off responses for sections like the RFP Executive Summary and legal boilerplate.

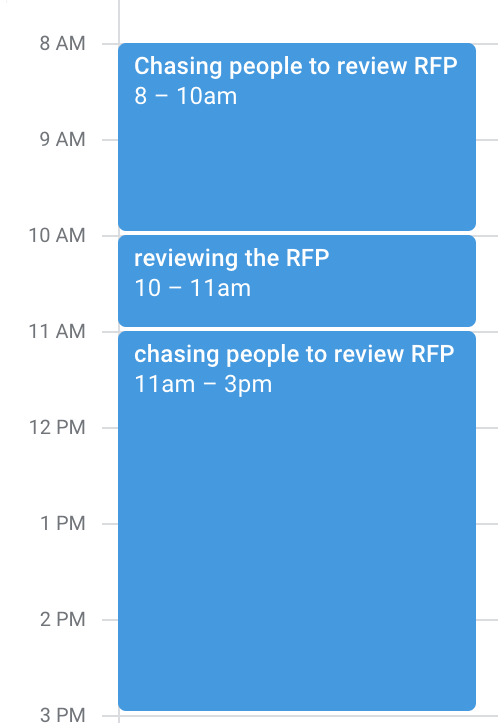

4. Chasing collaborators to review the RFP +1h per person

Validation is a key step in any RFP process, especially when it involves subject matter experts (SMEs).

After completing our portion of the questionnaire, we had a Sales Engineer and an Account Executive review it. Technically, this only took each of them 1 hour of review time, but it actually took 2 days to get this done because we needed to wait for our colleagues' schedules to free up.

This is probably the worst part of the process because it was out of our control.

It's tough to plan around people's schedules and in this case we think we got lucky. An RFP can involve 3 or more people, requiring you to juggle calendars for your sales, product, IT, compliance, and sales engineering teams.

Our calendar ended up looking something like this:

5. Final review of grammar, punctuation, and sanitization +1h

Alright, so this took less time than we expected. According to Google, the average proofreading speed is ~50 words per minute, so in our case we expected to run through our 11,000-word response in a little over 3.5 hours.

We knocked it out of the park.

It took us about 45 minutes to do a complete review, cleaning up any grammar and spelling issues along the way. Spellcheck did much of the work, but it wasn't able to understand most of the tech jargon, product names, and acronyms we used in our responses.
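Here is a quick sketch of how the proofreading estimate compares with what actually happened. The constant names are ours, chosen for illustration; the 11,000 words, ~50 words per minute, and 45 minutes all come from the numbers above.

```python
WORDS = 11_000        # approximate length of our full response
PROOFREAD_WPM = 50    # rough proofreading speed we found via Google
ACTUAL_MINUTES = 45   # what the final review actually took us

expected_minutes = WORDS / PROOFREAD_WPM
share = ACTUAL_MINUTES / expected_minutes

print(f"expected: {expected_minutes:.0f} min ({expected_minutes / 60:.1f} h)")
print(f"actual:   {ACTUAL_MINUTES} min ({share:.0%} of the estimate)")
```

In other words, the final pass took roughly a fifth of the time the rule of thumb predicted, which is probably more a credit to spellcheck than to us.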

Between searching, writing, collaborating with teammates, and reviewing the final product, we 'landed the plane' with 12 hours of hands-on work and a turnaround time of 3 days.
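To show where those 12 hours come from, here is a small sketch that adds up the rounded time for each step. The step labels and the `step_hours` name are ours; the hours are the rounded figures from each section above (the final review is counted as 1 hour even though it took closer to 45 minutes, and the collaborator review is 1 hour for each of our 2 reviewers).

```python
# Rounded hours per step, taken from the section titles above.
step_hours = {
    "writing answers": 5,
    "searching data sources": 2,
    "navigating the knowledge management tool": 2,
    "collaborator review (2 reviewers x 1 hour)": 2,
    "final review": 1,
}

total = sum(step_hours.values())

# Print each step with its share of the total, then the total itself.
for step, hours in step_hours.items():
    print(f"{step:<45}{hours:>3} h ({hours / total:.0%})")
print(f"{'total':<45}{total:>3} h")  # total is 12 hours
```

Writing is the single biggest line item, but searching and tool navigation together take almost as long, and none of this counts the 2 days spent waiting on calendars.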

Our experience completing this RFP manually was painful and we never want to do it again. We gained a newfound appreciation for the teams who do this on a daily basis.

How we cut time spent on this RFP from 3 days to < 1 hour

The response process is a grind. It takes patience. It feels like a marathon, but you're also in a race against competitors. Automating it creates a huge competitive advantage, so let's see how we can do that.

As a next step, we cleared out the answer column and uploaded our questionnaire into 1up. Here's how we were able to condense the time spent on each step of the process:

- Manually writing in answers

1up generates answers for us so we don't need to write them in manually.

- Searching for responses in different places

1up searches our vast knowledge base for the best documents and data sources so we don't have to.

- Navigating a legacy RFP management tool

There's no need to sift through previous question/answer pairs. 1up is generating new answers on the fly.

- Chasing collaborators to review the RFP

We no longer need subject matter experts to rewrite the answers. We can loop them in at any time for a stamp of approval.

- Final review of grammar, punctuation, and sanitization

We no longer worry about polish, answer quality, or data source hygiene. Our questionnaire came back with perfect spelling and grammar. Even poor data sources were corrected using 1up's automated RFP proofreading capability.

With 1up, our total time spent on the same RFP: 37 minutes.

Getting this process down from multiple business days to just under an hour felt good.

Here's what that looks like:

1up your sales team